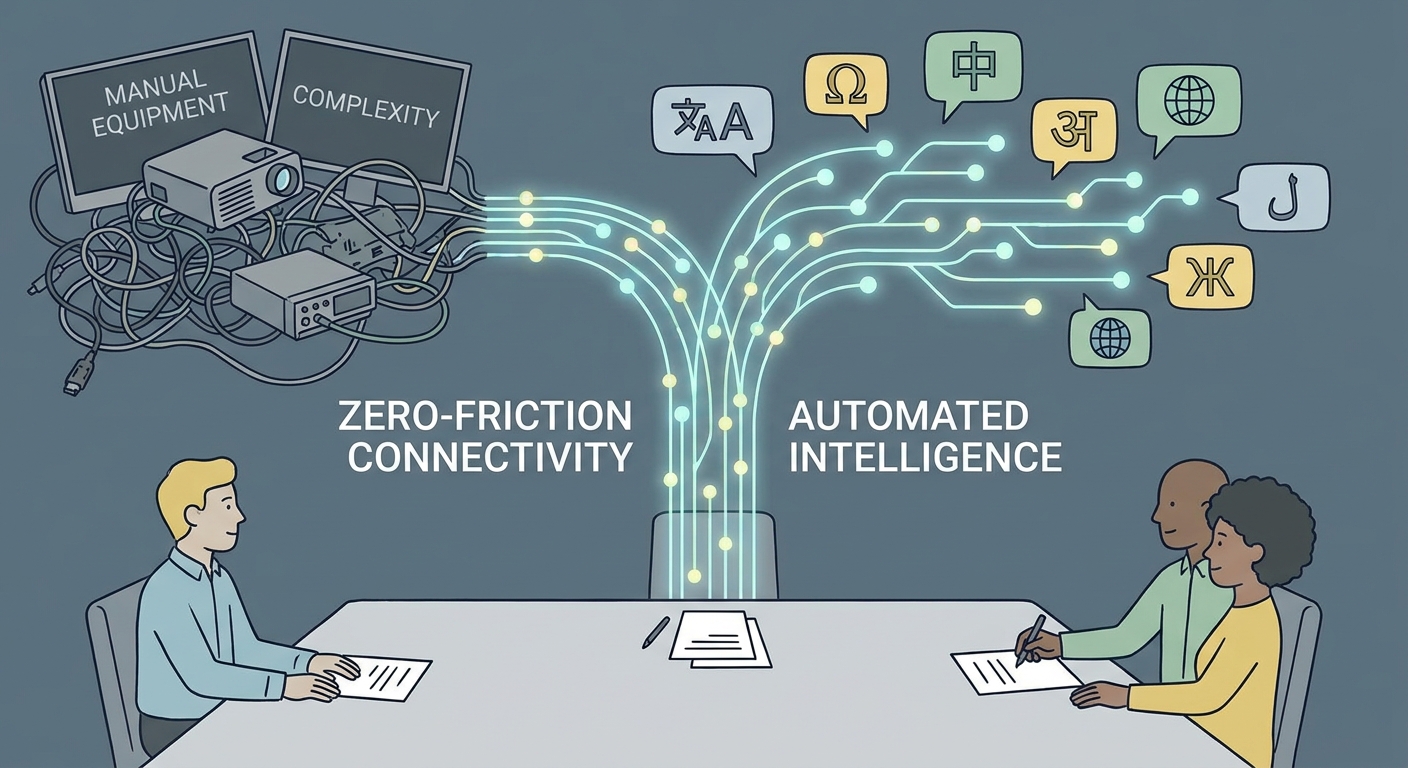

The days of the robotic, stuttering translator are numbered. For years, the enterprise standard for cross-lingual communication has been a clunky, multi-stage relay race. You know the drill: audio is transcribed, translated as text, and then synthesized back into speech. This "cascaded" approach is fundamentally flawed. It introduces agonizing latency, strips away human nuance, and creates a psychological barrier that turns fluid conversations into disjointed, performative exchanges.

As we move through 2026, the industry has reached a pivotal threshold. We are shifting from experimental novelty to what I call "Semantic Fluency." The goal isn't just to convert words anymore; it’s to carry intent, emotion, and context across linguistic divides with near-instantaneous speed.

The Architectural Pivot: Moving Beyond the Bottleneck

To understand why this shift matters, you have to look under the hood of the traditional translation pipeline. In a legacy cascaded system, your voice is captured and sent to an Automatic Speech Recognition (ASR) engine. That engine guesses the text, which is then passed to a separate Machine Translation (MT) model to find the equivalent words in a target language. Finally, a Text-to-Speech (TTS) synthesizer generates the audio.

Every step in this chain is a potential point of failure, and errors compound as they move downstream. If the ASR mishears a technical term, the translation is doomed. If the MT model misses a nuance, the TTS engine speaks it with the wrong inflection. It’s a game of telephone, and the message rarely survives the trip.
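To make the "game of telephone" concrete, here is a back-of-the-envelope sketch in Python. The per-stage figures are illustrative assumptions, not benchmarks, and the calculation assumes errors at each stage are independent, which real systems only approximate.

```python
from math import prod

# Hypothetical probability that a segment passes each stage unharmed.
# Illustrative numbers only; substitute measurements from your own pipeline.
stage_accuracy = {"asr": 0.95, "mt": 0.95, "tts": 0.98}


def chain_accuracy(stages: dict[str, float]) -> float:
    """Probability a segment survives every stage, assuming independent errors."""
    return prod(stages.values())


overall = chain_accuracy(stage_accuracy)
print(f"end-to-end: {overall:.3f}")  # prints "end-to-end: 0.884"
```

Even with every stage performing well on its own, the chain as a whole is noticeably weaker than its best link.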

End-to-End (E2E) architecture eliminates these silos. Instead of forcing language through the rigid, brittle intermediate of text, an E2E system treats speech as a continuous stream of acoustic features. It maps the source audio directly to the target audio in a single neural pass. This isn't just a technical optimization; it is a fundamental redesign that allows the model to "hear" the speaker's tone, rhythm, and emphasis, carrying those vital human elements through to the listener. As highlighted in recent Direct Speech-to-Speech Translation Research, removing the intermediate hand-offs is the most promising route to the sub-second latency required for high-stakes, real-time collaboration.

Latency as the Final Frontier

If you’ve ever sat in a meeting waiting three seconds for an interpreter to "catch up," you know how quickly natural rapport dies. In business, conversation is a dance of turn-taking and non-verbal cues. High latency kills this rhythm. It forces speakers to adopt a stilted, artificial cadence that stifles innovation and kills the deal.

When we talk about real-time speech-to-speech translation, we aren't just talking about speed. We’re talking about preserving the human element. In cascaded systems, the "pause of death" is hard to avoid because the system typically waits for a full sentence or phrase to be transcribed before it can begin translating. E2E models, however, process audio in small, overlapping windows, so translation can begin while the speaker is still mid-sentence. This is the difference between an automated tool and a true communication bridge.
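The windowing idea can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the function name, window size, and hop size are all assumptions, and a production system would operate on real audio frames rather than a list of integers.

```python
from typing import Iterator, Sequence


def overlapping_windows(
    samples: Sequence[float], window: int, hop: int
) -> Iterator[Sequence[float]]:
    """Yield windows of `window` samples, advancing by `hop` each time.

    A hop smaller than the window produces overlap. Trailing samples that do
    not fill a complete window are not emitted; a streaming system would keep
    them in the buffer until more audio arrives.
    """
    if window <= 0 or hop <= 0:
        raise ValueError("window and hop must be positive")
    last_start = max(len(samples) - window, 0)
    for start in range(0, last_start + 1, hop):
        yield samples[start:start + window]


# Ten dummy samples, windows of 4 with a hop of 2 (50% overlap).
chunks = list(overlapping_windows(list(range(10)), window=4, hop=2))
print(chunks)
# prints [[0, 1, 2, 3], [2, 3, 4, 5], [4, 5, 6, 7], [6, 7, 8, 9]]
```

Because each window is available as soon as its last sample arrives, downstream processing does not have to wait for a sentence boundary.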

Semantic Understanding: Meaning Over Syntax

The most sophisticated models of 2026 are moving past the literal. A word-for-word translation is often a recipe for disaster in a professional setting. Think about the phrase, "The board moved to dismiss the motion." A system relying on simple text mapping might confuse the corporate "motion" with physical movement.

True semantic translation requires context-aware modeling. It’s not just about what is said; it’s about why it is being said. As noted in the latest AI Speech Translation Trends 2026, the leading platforms are now integrating multi-turn context. The AI remembers what was discussed ten minutes ago to inform its current translation. This deep awareness is what transforms a translation tool into a business asset, ensuring that the intent of a legal argument or a medical diagnosis remains intact regardless of the language barrier.

The "Big Caveat": The Myth of the Universal Translator

There is a dangerous misconception that because AI is "smart," it is universally capable. In the enterprise world, general-purpose models often crash and burn when they hit the specialized vocabulary of a courtroom, a lab, or an operating room. A generic model might be fluent in everyday French or Japanese, but it will inevitably hallucinate or stumble when faced with proprietary technical jargon or unique industry nomenclature.

If your business operates in a high-stakes environment, you cannot rely on "out-of-the-box" solutions. You need industry-specific fine-tuning and custom glossary integration. Without a system that understands the specific lexicon of your field, you are asking a generalist to perform a specialist’s job. If you are struggling to integrate these systems into your specialized workflow, you should contact our team to discuss how we can tailor these models to your specific requirements.
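One simple way to pressure-test a vendor's glossary support is to check whether required terms actually appear in the output. The Python sketch below is a hypothetical helper, not part of any real platform's API: the function name and the two English-to-Spanish glossary entries are illustrative assumptions, and the naive substring match would need proper tokenization and inflection handling in practice.

```python
def missing_glossary_terms(
    source: str, translation: str, glossary: dict[str, str]
) -> list[str]:
    """Return source terms whose required rendering is absent from the translation.

    Matching is case-insensitive substring search, which is deliberately naive.
    """
    src = source.lower()
    out = translation.lower()
    return [
        term
        for term, required in glossary.items()
        if term.lower() in src and required.lower() not in out
    ]


# Illustrative glossary entries (assumed for this example).
glossary = {"board": "junta", "motion": "moción"}

source = "The board moved to dismiss the motion."
# A translation that renders "motion" as physical movement.
translation = "La junta decidió desestimar el movimiento."

print(missing_glossary_terms(source, translation, glossary))  # prints ['motion']
```

Run against a sample of your own transcripts, a check like this gives a quick count of how often a generic model drifts from your house terminology.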

The Future of Work: AI-Human Hybridization

We need to stop viewing "Human-in-the-Loop" as a sign of AI failure. In the highest-stakes environments (international arbitration, emergency medicine, high-level diplomatic briefings), the AI-Human hybrid model is the only responsible way forward.

AI does the heavy lifting. It handles the speed, the massive scale of data, and the real-time transcription of multi-turn dialogues. Human experts provide the final, critical layer of quality assurance and cultural nuance that no machine can fully replicate. This isn't a fallback; it is a strategic choice. By implementing quality assurance frameworks where human interpreters monitor and correct AI-generated streams in real time, organizations can achieve the speed of AI with the legal and ethical accountability of human professionals.

How to Evaluate an S2S Provider: A Checklist

Selecting an S2S provider isn't about reading marketing brochures; it’s about auditing infrastructure. If you are vetting a partner, demand transparency on these three pillars:

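- Latency Metrics: Ask for the "End-to-End Latency" in milliseconds, measured from the speaker's words to the translated audio, and ask for the distribution rather than a single average. Anything consistently over 500ms will feel sluggish in a live meeting.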

- Domain-Specific Accuracy: Can the vendor demonstrate how their model handles your specific business jargon? Ask for a trial using your own historical data or transcripts.

- Data Privacy and Security: Real-time streams are a goldmine of sensitive information. Ensure that your vendor offers localized processing options or strictly compliant, encrypted pipelines that do not train on your private meeting data.
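For the latency pillar, a short Python sketch shows how trial measurements could be summarized against the 500ms threshold. The function name, the report fields, and the sample values are assumptions made for illustration; the percentile calculation uses the standard library's `statistics.quantiles`, which needs at least two measurements.

```python
import statistics


def latency_report(samples_ms: list[float], budget_ms: float = 500.0) -> dict[str, float]:
    """Summarize end-to-end latency samples against a latency budget."""
    # quantiles(n=100) returns the 99 cut points between percentiles 1..99.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    over_budget = sum(1 for s in samples_ms if s > budget_ms)
    return {
        "p50_ms": cuts[49],
        "p95_ms": cuts[94],
        "share_over_budget": over_budget / len(samples_ms),
    }


# Hypothetical measurements from a vendor trial, in milliseconds.
trial = [300.0, 350.0, 400.0, 450.0, 620.0]
report = latency_report(trial)
print(report)
```

A healthy median can hide a slow tail, so the 95th percentile and the share of utterances over budget are usually more telling than the average.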

Bridging the Global Gap

The technology to tear down language barriers is no longer on the horizon; it is here. By shifting from the brittle, cascaded pipelines of the past to the fluid, neural architecture of End-to-End translation, enterprises can finally achieve the seamless communication they’ve been promised for a decade.

However, the technology is only as good as its implementation. Success in 2026 requires a pragmatic approach that favors semantic accuracy, minimizes latency, and acknowledges the vital role of human oversight in specialized domains. If your organization is ready to move beyond the limitations of legacy translation and start building truly global, frictionless relationships, explore our translation services to see how we are deploying these advanced systems for the modern enterprise.

Frequently Asked Questions

What is the primary difference between "Cascaded" and "End-to-End" translation?

Cascaded systems rely on a chain of three distinct models (Automatic Speech Recognition, Machine Translation, and Text-to-Speech), which lets errors propagate from one link to the next. End-to-End (E2E) systems use a single neural network to translate directly from source audio to target audio, preserving the speaker's prosody (tone and rhythm) while significantly reducing latency.

Is End-to-End S2S translation accurate enough for professional use?

E2E translation can be accurate enough for general conversation, but in high-stakes fields such as law or medicine, it should be paired with human-in-the-loop validation. This hybrid approach ensures that industry-specific nuances and technical terminology are handled with the precision required for professional compliance.

How does latency impact real-time speech translation?

Latency disrupts turn-taking. When the translation lags, the natural flow of conversation breaks, leading to interruptions and disjointed dialogue. E2E translation addresses this by processing audio in small windows rather than waiting for full sentences, which makes sub-second latency achievable and keeps the exchange feeling like a natural conversation.

Can these systems handle industry-specific jargon?

Generic models often struggle with specialized terminology and may hallucinate or misinterpret technical terms. To handle industry-specific jargon effectively, you must use platforms that support custom glossaries and domain-specific fine-tuning, ensuring the model understands the unique vocabulary of your specific field.