Understanding Multi-Modal Emotion Recognition in Dialogue

TL;DR

- MERC combines text, voice, and vision to interpret human emotions accurately.

- Single-modality AI fails to detect sarcasm and complex emotional context.

- Advanced fusion models dynamically weight inputs for real-time accuracy.

- MERC is essential for building truly intelligent, human-centric interfaces.

We’ve spent the last decade building machines that can calculate, code, and compose. But we’ve largely ignored the most important part of the human experience: the "vibe."

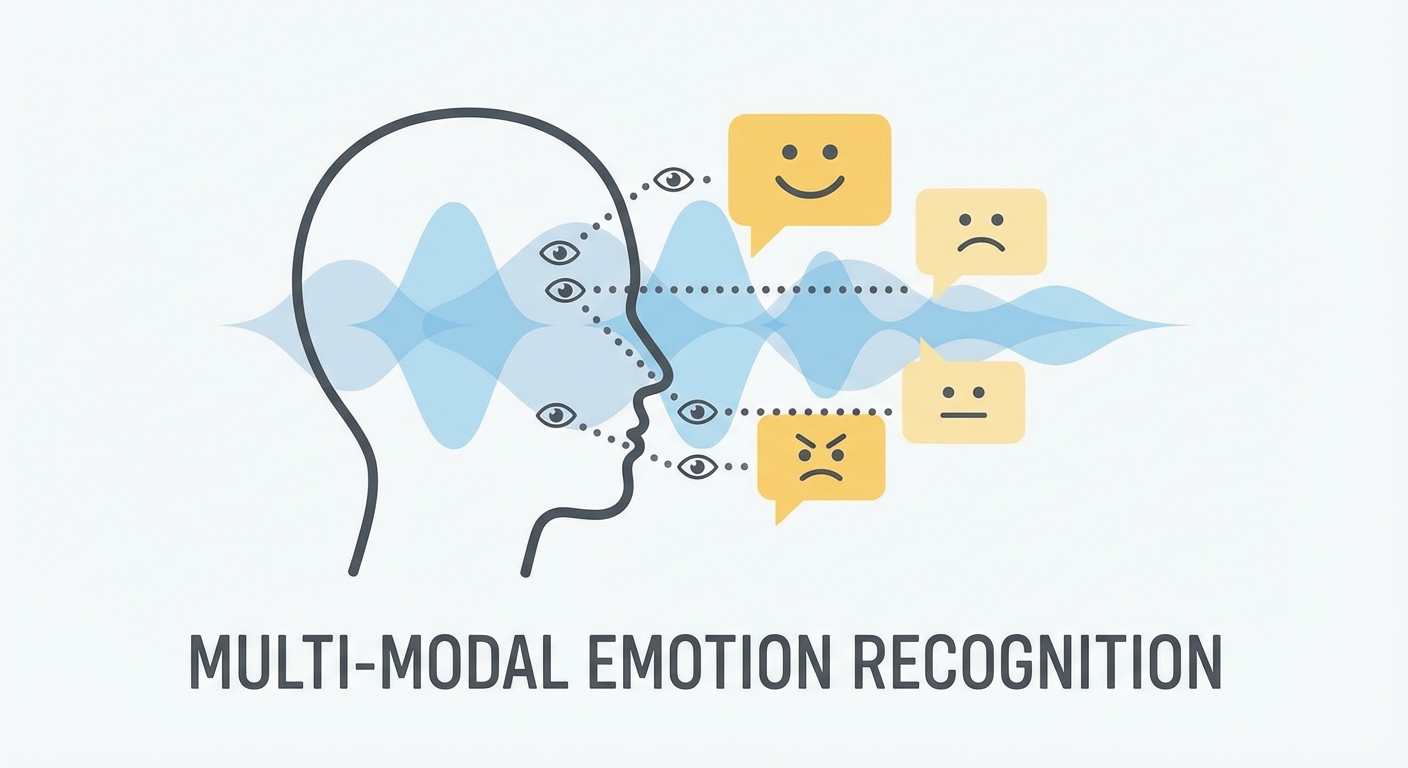

Multi-Modal Emotion Recognition in Conversations (MERC) is changing that. It’s the shift from machines that just crunch data to systems that actually get us. By layering linguistic patterns, the tone of your voice, and the micro-expressions on your face, MERC moves past the shallow "positive vs. negative" binary. It maps the messy, complicated reality of human affect (joy, frustration, skepticism, grief) in real time. As established in the EMNLP 2025 findings on MERC, we’ve reached a tipping point. The convergence of NLP, computer vision, and speech processing isn't just an academic toy anymore; it’s a functional requirement for any interface that wants to call itself "intelligent."

What is Multi-Modal Emotion Recognition (MERC)?

At its heart, MERC is the art of fusion. It doesn't hunt for a single "smoking gun" to tell it how you’re feeling. Instead, it stitches together disparate data streams. Natural Language Processing (NLP) parses what you’re actually saying. Speech processing models listen for pitch, jitter, and cadence. Computer vision tracks facial landmarks to spot subtle muscular shifts.

The goal? Granular, accurate detection of your state of mind. Traditional sentiment analysis might flag a sentence as "neutral." A MERC system, however, can tell the difference between a neutral statement spoken with cold, professional detachment and one delivered with calm, warm engagement. By leveraging recent advancements in Mixture-of-Experts architectures, these systems can weigh the reliability of each input on the fly. If you’re sitting in a dark room, the system knows to stop relying on visual data and double down on your audio and text. It keeps the "emotional signal" clear, even when the environment is working against it.

Why Is Single-Modality Analysis No Longer Enough?

The biggest failure of legacy AI is the "Sarcasm Problem."

Picture this: You’ve been on hold with a customer support bot for forty minutes. You finally get through, and you say, "Great, thanks for the help." A text-only analyzer sees that as "positive." It’s a happy sentence, right? Wrong. It completely misses the context of the wait time and the biting, flat pitch of your voice.

Multi-modal systems solve this by refusing to treat text as the only piece of the puzzle. When the audio encoder hears that sarcastic cadence—or the camera catches an eye-roll—the fusion layer triggers a re-classification. It knows exactly what you mean.
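A minimal sketch of that re-classification, assuming a simple late-fusion scheme in which each modality produces its own class probabilities and the fusion layer averages them. The label set, the probability values, and the helper names `late_fusion` and `top_label` are all made up for illustration; a production fusion layer would typically be learned rather than a plain average.

```python
# Hypothetical per-modality class probabilities for the utterance
# "Great, thanks for the help." All numbers are illustrative only.
LABELS = ["positive", "neutral", "negative"]


def late_fusion(modality_probs, weights):
    """Weighted average of per-modality probability vectors."""
    total = sum(weights[name] for name in modality_probs)
    fused = [0.0] * len(LABELS)
    for name, probs in modality_probs.items():
        for i, p in enumerate(probs):
            fused[i] += weights[name] / total * p
    return fused


def top_label(probs):
    """Return the label with the highest fused probability."""
    return LABELS[max(range(len(probs)), key=probs.__getitem__)]


text_only = {"text": [0.80, 0.15, 0.05]}
all_modalities = {
    "text": [0.80, 0.15, 0.05],    # the words look happy
    "audio": [0.05, 0.15, 0.80],   # flat, biting pitch
    "vision": [0.10, 0.20, 0.70],  # eye-roll
}
weights = {"text": 1.0, "audio": 1.0, "vision": 1.0}

print(top_label(late_fusion(text_only, weights)))       # positive
print(top_label(late_fusion(all_modalities, weights)))  # negative
```

With text alone the utterance reads as positive; once the audio and vision estimates are averaged in, the fused label flips to negative.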

How Do Modern MERC System Architectures Work?

We’ve moved toward Mixture-of-Experts (MoE) architectures, and it’s changed everything. In the old days, monolithic models forced every modality through one giant neural network. This usually led to "modality dominance," where one strong signal—like text—would drown out the subtle nuance in your voice or face.

Modern systems use adaptive fusion weights. Think of the system as a traffic controller. It’s constantly checking the signal-to-noise ratio of every input. If your audio is distorted by background noise, the gate mechanism dials back the audio "expert" and leans into the text and visual cues. As documented in recent research on Mixture-of-Experts for Emotion Recognition, this makes for a system that actually works in the real world, not just in a lab.
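One way to picture the traffic controller is a softmax over per-modality quality scores. The sketch below assumes each modality already comes with a scalar quality estimate (higher means cleaner); the scores and the function names `softmax` and `adaptive_weights` are hypothetical, and in a real system the gate would usually be a learned network rather than a fixed formula.

```python
import math


def softmax(scores, temperature=1.0):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp((s - m) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def adaptive_weights(quality):
    """Map per-modality quality scores (higher = cleaner) to fusion weights."""
    names = list(quality)
    weights = softmax([quality[n] for n in names])
    return dict(zip(names, weights))


# All inputs equally clean: the three modalities share the weight evenly.
clean = adaptive_weights({"text": 2.0, "audio": 2.0, "vision": 2.0})

# Audio distorted by background noise: its weight shrinks and the
# remaining weight shifts to text and vision.
noisy_audio = adaptive_weights({"text": 2.0, "audio": -1.0, "vision": 2.0})

print(clean)
print(noisy_audio)
```

The weights always sum to one, so dialing back one expert automatically leans harder on the others.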

Deep Dive: The MoE Logic for Developers

At the architectural level, MoE in MERC relies on a "gating network" that assigns importance scores to specialized sub-networks, or "experts." Instead of training one massive, bloated model to handle every possible emotional context, we train experts to focus on specific modalities or emotional clusters (e.g., high-arousal vs. low-arousal states). During inference, the gating network picks the experts that provide the highest confidence score for the current dialogue. It’s faster, it’s lighter, and it’s way more accurate.
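The gating step can be sketched as top-k routing: keep the k experts with the highest gate scores, renormalize those scores, and combine only the selected experts' outputs. Everything here is a toy assumption: the experts are simple scaling functions, the gate logits are hard-coded rather than produced by a trained gating network, and `top_k_gate` and `moe_forward` are illustrative names.

```python
import math


def top_k_gate(gate_logits, k=2):
    """Keep the k highest-scoring experts and renormalize with a softmax."""
    ranked = sorted(
        range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True
    )[:k]
    m = max(gate_logits[i] for i in ranked)
    exps = {i: math.exp(gate_logits[i] - m) for i in ranked}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = top_k_gate(gate_logits, k)
    output = None
    for idx, weight in gates.items():
        y = experts[idx](x)  # unselected experts are never evaluated
        if output is None:
            output = [weight * v for v in y]
        else:
            output = [o + weight * v for o, v in zip(output, y)]
    return output, gates


# Toy stand-ins for trained experts (e.g. one per modality or arousal cluster).
experts = [
    lambda x: [1.0 * v for v in x],
    lambda x: [2.0 * v for v in x],
    lambda x: [-1.0 * v for v in x],
]
gate_logits = [2.0, 2.0, -5.0]  # hypothetical output of a gating network

output, gates = moe_forward([1.0, 3.0], experts, gate_logits, k=2)
print(gates)   # experts 0 and 1 share the weight equally
print(output)  # [1.5, 4.5]
```

Because the third expert is never called, compute scales with k rather than with the total number of experts, which is where the speed and size savings come from.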

The Role of Contextual Awareness and Prompt Learning

Emotion doesn't happen in a vacuum. It’s a trajectory. How you feel right now is heavily influenced by what happened ten seconds ago—or ten minutes ago. We’re finally moving away from isolated sentence analysis and toward long-form dialogue history.

By integrating LLM-based prompting, developers can now give the model a "memory." As highlighted in recent Nature research on Prompt Learning in MERC, zero-shot emotion detection is getting a massive boost when you prompt the model to look at the preceding dialogue. We aren't just asking, "Is this sentence angry?" anymore. We’re asking, "Given the user's frustration regarding the previous billing error, does this new statement indicate resolution or continued agitation?" That context turns a static classification task into a dynamic understanding of a human narrative.
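As a rough illustration of context-aware prompting, the sketch below assembles a zero-shot prompt that places recent dialogue turns ahead of the utterance being labeled. The prompt wording, the label set, and the function name `build_emotion_prompt` are assumptions made for this example, and sending the prompt to a model is deliberately left out.

```python
def build_emotion_prompt(history, current_utterance, labels, max_turns=6):
    """Assemble a zero-shot prompt that includes recent dialogue context.

    history: list of (speaker, text) tuples, oldest first.
    """
    recent = history[-max_turns:]  # keep only the most recent turns
    context = "\n".join(f"{speaker}: {text}" for speaker, text in recent)
    return (
        "You are labeling the emotion of the final utterance in a conversation.\n"
        f"Allowed labels: {', '.join(labels)}\n\n"
        f"Dialogue so far:\n{context}\n\n"
        f"Final utterance: {current_utterance}\n"
        "Taking the earlier turns into account, answer with exactly one label."
    )


history = [
    ("User", "I was charged twice for my subscription this month."),
    ("Agent", "I'm sorry about that. I've issued a refund for the duplicate charge."),
]
prompt = build_emotion_prompt(
    history,
    "Okay, I'll wait and see if it actually shows up.",
    labels=["resolved", "neutral", "frustrated"],
)
print(prompt)
```

The `max_turns` cap is one simple way to bound prompt length; longer histories could instead be summarized before being inserted.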

What Are the Primary Challenges Facing MERC in 2026?

We’ve made huge leaps, but we aren't at the finish line yet. Three hurdles still loom large:

- The Performance-Confidence Gap: Models often hit 95% accuracy on clean, curated research datasets. In the real world—like a loud call center or a crowded subway—that number can drop to 70%. We need better synthetic data augmentation that mimics the grit of real-world noise.

- Data Scarcity and Cultural Nuance: Emotional expression isn't universal. A smile in one culture might mean joy; in another, it might be a mask for deep discomfort or social deference. Training models that "get" culture is a massive, ongoing challenge.

- Latency: Real-time inference is a tightrope walk. If a model takes 500 ms to process your video and audio, the conversation feels robotic and laggy. Making these fusion layers faster is currently the hottest area of R&D.
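For the noise-augmentation idea in the first hurdle, a common building block is mixing a clean recording with noise at a chosen signal-to-noise ratio. The sketch below uses a synthetic sine tone and Gaussian noise as stand-ins for real speech and real background recordings; the function names and the 5 dB target are illustrative assumptions.

```python
import math
import random


def rms(signal):
    """Root-mean-square amplitude of a list of samples."""
    return math.sqrt(sum(s * s for s in signal) / len(signal))


def mix_at_snr(clean, noise, snr_db):
    """Scale `noise` so the clean-to-noise ratio equals snr_db, then add it."""
    target_noise_rms = rms(clean) / (10 ** (snr_db / 20))
    scale = target_noise_rms / rms(noise)
    return [c + scale * n for c, n in zip(clean, noise)]


rng = random.Random(0)  # fixed seed so the example is repeatable
sample_rate = 16000

# Stand-ins: a 220 Hz tone for "speech", Gaussian noise for "background".
clean = [math.sin(2 * math.pi * 220 * t / sample_rate) for t in range(sample_rate)]
noise = [rng.gauss(0.0, 1.0) for _ in range(sample_rate)]

noisy = mix_at_snr(clean, noise, snr_db=5.0)

# Check the achieved SNR by measuring what was added to the clean signal.
residual = [n - c for n, c in zip(noisy, clean)]
measured_snr_db = 20 * math.log10(rms(clean) / rms(residual))
print(round(measured_snr_db, 3))
```

Sweeping `snr_db` over a range during training is one way to expose a model to conditions closer to a loud call center than to a quiet lab.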

Real-World Applications: Where is MERC Making an Impact?

MERC is finding a home in any sector where high-stakes human interaction is the norm. For those looking to bridge the gap between tech and human connection, our AI development services focus on tailoring these models to your specific industry needs.

| Industry | Primary Modality Focus | Primary Use Case |
|---|---|---|
| Healthcare | Audio/Text | Detecting early signs of depression or patient distress in telehealth. |
| Customer Experience | Text/Audio | Real-time sentiment routing to human agents for high-frustration calls. |
| Gaming | Vision/Audio | NPCs that react to the player's actual emotional state, not just their actions. |

How Can We Address Ethical Concerns and Data Privacy?

Let’s be clear: biometrics are sensitive. "Privacy-Preserving Emotion AI" isn't a nice-to-have; it’s a non-negotiable requirement. The industry is shifting toward edge processing, where the inference happens locally on your device. With federated learning, devices share model updates rather than recordings, so models can get smarter across a population without your raw audio or video ever being uploaded to a central server. We want the AI to learn from the nuance of your emotion without it ever needing to "see" you.
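The server-side half of federated learning can be sketched as a weighted average of client model weights, in the style of federated averaging (FedAvg). This is an assumption about which aggregation rule is used, since the text does not name one; the two-parameter "models" and example counts are toy values, and local training, secure aggregation, and communication are omitted.

```python
def federated_average(client_updates):
    """Average client weights, weighting each client by its example count.

    client_updates: list of (weights, num_examples) tuples, where weights
    is a list of floats with the same length for every client.
    """
    total_examples = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_examples
    return averaged


# Each device trains locally and sends back only its weights and a count.
# No audio or video appears anywhere in this exchange.
updates = [
    ([1.0, 0.0], 100),  # device A, trained on 100 local examples
    ([3.0, 2.0], 300),  # device B, trained on 300 local examples
]
global_weights = federated_average(updates)
print(global_weights)  # [2.5, 1.5]
```

Weighting by example count means devices with more local data pull the shared model further, while the server only ever sees parameter vectors.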

Future Outlook: Toward Human-Like, Empathic AI

We’re moving away from an era of reactive AI—the kind that waits for a command—and into an era of proactive, emotionally intelligent agents. Future systems will sense when you’re getting overwhelmed or confused. They’ll adjust their tone, their pacing, and their vocabulary to de-escalate the situation before you even have to ask.

The trajectory is clear. The most successful AI of the next decade won’t be the one with the most data—it’ll be the one that best understands the human experience. If you’re ready to see how these technologies can actually move the needle for your business, contact our team for bespoke AI solutions.

Frequently Asked Questions

What is the difference between sentiment analysis and emotion recognition?

Sentiment analysis is a surface-level classification, typically binary (positive, negative) or ternary (positive, negative, neutral). Emotion recognition is granular and multidimensional; it attempts to identify specific affective states like joy, fear, anger, disgust, or surprise, providing a deeper understanding of the user's psychological state.

Why does multi-modal emotion recognition fail in real-world settings?

Failure in the wild is usually caused by "distribution shift." Models trained in quiet, controlled labs struggle when introduced to background noise, varying microphone quality, or poor lighting. Additionally, real-world conversations are often messy and lack the clear emotional cues found in curated datasets, leading to lower confidence scores in production.

Is it possible to detect emotion while maintaining user privacy?

Yes. Modern implementations utilize edge-processing, where the AI model runs locally on the user's device. Because the raw data (audio/video) never leaves the device and is not stored in the cloud, the system can provide emotional analysis while adhering to strict privacy regulations and user data protection standards.

Which modality is most important for accurate detection?

There is no "silver bullet" modality. The importance of specific inputs shifts based on the context of the interaction. However, current research suggests that the synergy between text and audio is the strongest driver of performance, as they provide both the semantic "what" and the prosodic "how" of a conversation, which together capture the vast majority of human emotional intent.