The era of robotic, monotone voice synthesis? It’s dead. Buried.

In 2026, the success of an AI-driven app doesn't hinge on whether it can speak. Any machine can make noise. The real test is how convincingly it communicates. Choosing the right text-to-speech (TTS) provider is now a high-stakes chess match where technical architecture, dialectal nuance, and sub-200ms latency collide. Whether you’re building a global customer support agent or a high-end storytelling app, the "voice" you pick is the face of your brand. If it sounds fake, your product feels fake.

Why Your App’s Voice is More Than Just a Feature

We’ve moved light-years past simple text-to-audio conversion. Today, the gold standard is "conversational prosody": that elusive ability to nail the rhythmic cadence, the natural hesitations, and the raw emotional undertones of a real human. According to the State of Voice Report 2026, users place more trust in interfaces that mirror their own regional speech patterns. They don't want a "neutral" robot. They want someone who sounds like they belong.

This shift has created the new frontier of "Hyper-Localization." It isn't enough to boast a library of 100 languages anymore. Users are smart. They can smell a synthetic voice trained on generic, broadcast-style data from a mile away. If your target demographic is in Mexico City, a generic Spanish voice trained on Peninsular Spanish data will sound jarringly foreign. It’s like wearing a winter coat to the beach—it just doesn't fit. That mismatch erodes trust in seconds. Your voice is the anchor for your user’s emotional connection to your product. Don't sink it with a bad accent.

How Do Modern Neural TTS Architectures Actually Work?

Ever wonder why some voices feel "alive" while others feel like they’re reading from a teleprompter in a basement? It comes down to the pipeline. Modern synthetic speech treats text not as a string of characters, but as a map of intent.

It starts with linguistic analysis: the system normalizes the text (expanding numbers, dates, and abbreviations) and works out how each word should be pronounced. You steer that stage with Speech Synthesis Markup Language (SSML). Think of SSML as the "director’s notes" for your AI. It tells the system when to pause for dramatic effect, which words to punch, and, on providers that support it, where to breathe. This data heads to a Neural Acoustic Model, which predicts how the voice should sound (typically as a spectrogram), and finally, a vocoder reconstructs those predictions into a smooth audio waveform.
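
To make the "director’s notes" idea concrete, here is a minimal sketch that builds a small SSML document with one pause and one stressed phrase. The `break` and `emphasis` elements come from the W3C SSML specification; the function name `build_ssml`, its parameters, and the `es-MX` default are assumptions for illustration. Providers differ in which SSML elements and root attributes they accept, so check your vendor's documentation before relying on any of them.

```python
import xml.etree.ElementTree as ET


def build_ssml(lead: str, stressed: str, rest: str,
               pause_ms: int = 300, lang: str = "es-MX") -> str:
    """Return an SSML string: lead text, a pause, a stressed phrase, then the rest.

    Hypothetical helper for illustration. Some providers also require an
    xmlns attribute on <speak>; that is left out here.
    """
    speak = ET.Element("speak", {"version": "1.0", "xml:lang": lang})
    speak.text = lead + " "

    # <break> asks the engine to pause for a fixed duration.
    pause = ET.SubElement(speak, "break", {"time": f"{pause_ms}ms"})
    pause.tail = " "

    # <emphasis> marks the words the voice should punch.
    emphasis = ET.SubElement(speak, "emphasis", {"level": "strong"})
    emphasis.text = stressed
    emphasis.tail = " " + rest

    # ElementTree escapes characters such as & and < in the text for us.
    return ET.tostring(speak, encoding="unicode")


if __name__ == "__main__":
    print(build_ssml("Hola,", "bienvenido", "a tu nueva cuenta."))
```

Building the markup with an XML library rather than string concatenation is a deliberate choice: user-supplied text containing `&` or `<` would otherwise produce invalid SSML.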

This is miles ahead of the old-school "concatenative" systems that just stitched together pre-recorded snippets of human speech like a digital Frankenstein. Neural TTS generates audio from scratch. That’s why it can keep a consistent tone over long, complex sentences, provided it was trained on high-fidelity, native-speaker data.

Which TTS Providers Lead the Market in 2026?

The market is split. On one side, you have the high-volume, low-latency utility players. On the other, the high-fidelity, narrative-focused platforms. When you’re shopping for a partner, ignore the "voice count" marketing fluff. A provider with 500 voices is useless if the latency is so high the user starts wondering if the server crashed.

| Provider | Voice Count | Typical Latency | Key Strengths |
|---|---|---|---|
| Global Neural Lab | 120+ | ~120ms | Low-latency, multilingual stability |
| NarrativeGen AI | 45 | ~350ms | High emotional range, long-form content |
| EnterpriseVoice | 30 | ~150ms | On-premise security, custom voice cloning |
| Polyglot Synth | 250+ | ~200ms | Massive language coverage, regional dialects |
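
If you want to turn a comparison like this into a repeatable shortlist, a rough sketch follows. It copies the figures from the table above and filters by a latency budget instead of voice count. The `Provider` class and `shortlist` function are hypothetical names, the latency values are the table's approximate numbers rather than measurements, and "under 200ms" is treated as a strict cutoff.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Provider:
    name: str
    min_voice_count: int  # "120+" in the table is stored as 120
    latency_ms: int       # approximate figure from the table, not a measurement
    strengths: str


PROVIDERS = [
    Provider("Global Neural Lab", 120, 120, "Low-latency, multilingual stability"),
    Provider("NarrativeGen AI", 45, 350, "High emotional range, long-form content"),
    Provider("EnterpriseVoice", 30, 150, "On-premise security, custom voice cloning"),
    Provider("Polyglot Synth", 250, 200, "Massive language coverage, regional dialects"),
]


def shortlist(providers: List[Provider], max_latency_ms: int) -> List[Provider]:
    """Keep providers strictly under the latency budget, fastest first."""
    fits = [p for p in providers if p.latency_ms < max_latency_ms]
    return sorted(fits, key=lambda p: p.latency_ms)


if __name__ == "__main__":
    for provider in shortlist(PROVIDERS, max_latency_ms=200):
        print(f"{provider.name}: ~{provider.latency_ms}ms")
```

Note that voice count never enters the filter. For a long-form narration product you would swap the latency budget for a different criterion.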

Does Your Use Case Require Multilingual or Dialect-Specific Support?

There is a dangerous "vanity metric" in the voice industry: the number of supported languages. Ignore it. A provider might claim 150 languages, but if their prosody is off—or worse, if they suffer from "accent leakage"—your product is doomed.

What’s "accent leakage"? It’s when a model trained primarily in one language tries to speak another, resulting in an uncanny, distracting cadence that sounds like a parody. For AI voice technology to truly transform user experience, you need models trained on native-speaker data. If you’re serving a global audience, prioritize providers that offer native-trained models for your top markets. If you’re building a niche local assistant, a smaller provider with deep, high-quality data in that specific language will beat a massive, generic provider every single time.

The "Anti-Benchmark" Guide: How to Test Voices Yourself

Vendor-provided benchmarks are designed to make them look good, not to show you the truth. Never rely on their marketing demos. Run your own "Anti-Benchmark" tests. Use a script that actually mimics your production environment.

- Latency (The 200ms Wall): In a conversation, the gap between the user stopping and the AI starting needs to be under 200ms. If it’s slower, the flow dies. The psychological illusion of a real conversation vanishes.

- Emotional Nuance: Throw a script at the system with questions, exclamations, and empathetic statements. Does it sound bored when it should sound concerned? Does it sound robotic when it should sound excited?

- The Jargon Test: Feed it your industry-specific acronyms and weird product names. If it butchers them, it’s not ready for prime time.

- Filler Handling: Can the system handle "um," "ah," or other natural stutters? A voice that is too "clean" often sounds fake. Real people use fillers.
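
The latency check in the list above is the easiest one to automate. Below is a minimal sketch of a harness that measures time to first audio chunk over your own scripts and reports the median, 95th percentile, and worst case. It assumes your provider's client can be wrapped as a function that takes text and yields audio bytes; `fake_stream` is a stand-in for that wrapper, not a real SDK call. It also measures only the TTS leg, so the full conversational gap (speech recognition, language model, network) will be larger.

```python
import math
import statistics
import time
from typing import Callable, Dict, Iterable, Iterator, List

# Assumed shape of a streaming TTS call: text in, audio chunks out.
Synth = Callable[[str], Iterable[bytes]]


def time_to_first_chunk_ms(synthesize: Synth, text: str) -> float:
    """Milliseconds from issuing the request until the first chunk arrives."""
    start = time.perf_counter()
    next(iter(synthesize(text)), None)  # blocks until the first chunk (or end)
    return (time.perf_counter() - start) * 1000.0


def latency_report(synthesize: Synth, scripts: List[str], runs: int = 5) -> Dict[str, float]:
    """Run every script `runs` times and summarize the latency samples."""
    samples = sorted(
        time_to_first_chunk_ms(synthesize, text)
        for text in scripts
        for _ in range(runs)
    )
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(samples)) - 1)
    return {
        "median_ms": statistics.median(samples),
        "p95_ms": samples[p95_index],
        "max_ms": samples[-1],
    }


def fake_stream(text: str) -> Iterator[bytes]:
    """Hypothetical provider stub: waits 20 ms, then yields silent audio."""
    time.sleep(0.02)
    yield b"\x00" * 320
    yield b"\x00" * 320


if __name__ == "__main__":
    production_like = ["Your order ships Tuesday.", "I'm so sorry to hear that."]
    print(latency_report(fake_stream, production_like))
```

Look at the 95th percentile rather than the average. A provider that is fast most of the time but stalls on one request in twenty will still break the conversational illusion.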

What Should You Look for in a TTS API?

Your decision should flow directly from your use case. Are you building a rapid-fire customer support bot? Your needs are totally different from someone creating an AI-narrated audiobook.

If you need extreme personalization, look into voice cloning; the ElevenLabs Voice Cloning Guide is one place to start. Creating a proprietary brand voice gives you a unique sonic fingerprint that competitors can’t touch. Just remember: with great power comes great maintenance. Managing custom models is a lot more work than just calling a standard API endpoint.

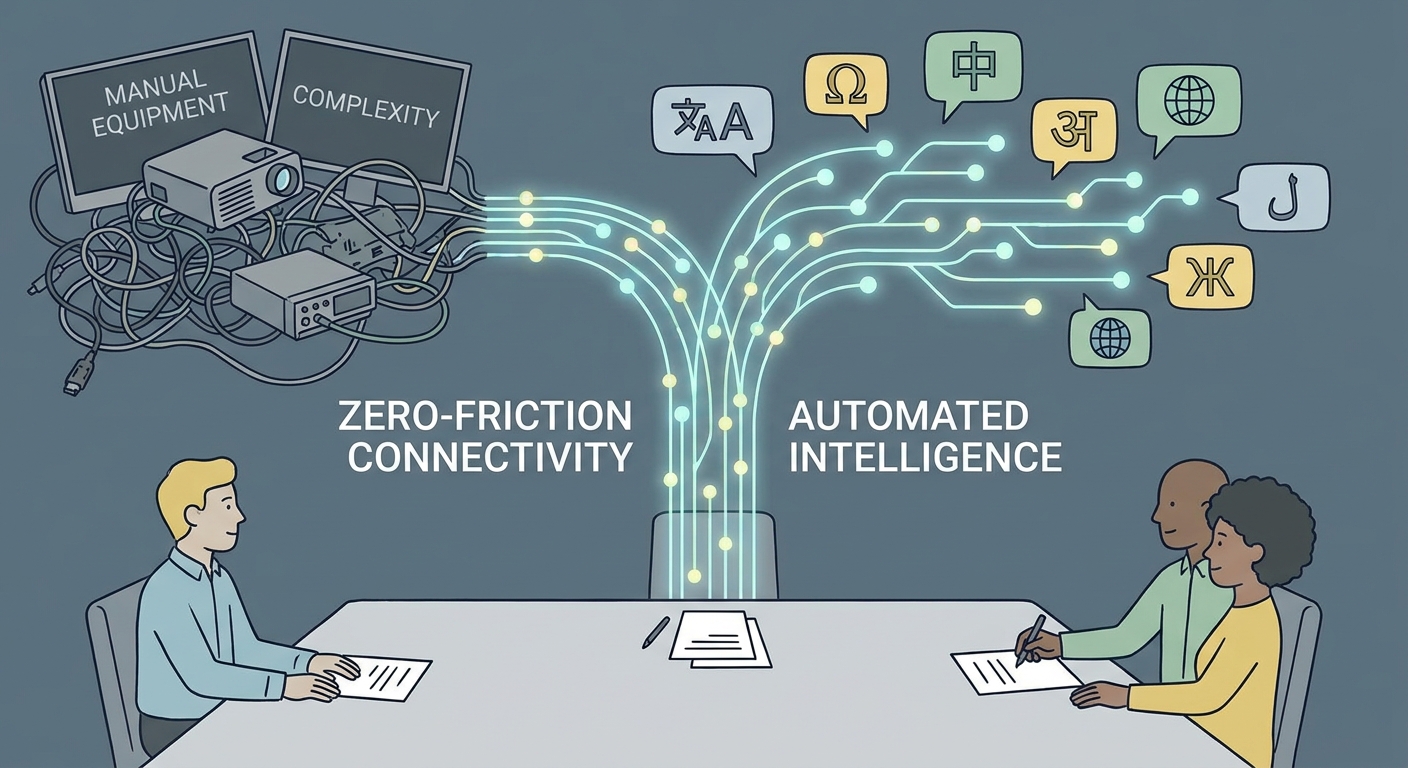

How Can You Future-Proof Your Voice Integration?

The industry is charging toward multimodal, agentic systems—AI that hears, understands context, and acts in real-time. Don't hard-code your voice logic. Use abstraction layers. That way, if a better model comes out next month, you can swap providers without ripping your entire codebase apart.
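
One way to picture such an abstraction layer is sketched below, under the assumption that every provider can be reduced to "text and voice in, audio bytes out". All names here (`SpeechProvider`, `VoiceService`, `SilentProvider`) are hypothetical; a real adapter would wrap your vendor's SDK behind the same method.

```python
from typing import Optional, Protocol


class SpeechProvider(Protocol):
    """The only surface the rest of the app is allowed to depend on."""

    def synthesize(self, text: str, voice: str) -> bytes:
        ...


class SilentProvider:
    """Stand-in adapter for illustration: one zero byte per input character."""

    def synthesize(self, text: str, voice: str) -> bytes:
        return b"\x00" * len(text)


class VoiceService:
    """App-facing wrapper. Callers never import a vendor SDK directly."""

    def __init__(self, provider: SpeechProvider, default_voice: str) -> None:
        self._provider = provider
        self._default_voice = default_voice

    def say(self, text: str, voice: Optional[str] = None) -> bytes:
        return self._provider.synthesize(text, voice or self._default_voice)

    def swap_provider(self, provider: SpeechProvider) -> None:
        """Replace the backend without touching any calling code."""
        self._provider = provider


if __name__ == "__main__":
    service = VoiceService(SilentProvider(), default_voice="es-MX-default")
    audio = service.say("Hola")
    print(len(audio), "bytes of audio")
```

Because `SpeechProvider` is a structural `Protocol`, a new adapter only needs a matching `synthesize` method. It does not have to inherit from anything, which keeps vendor-specific code isolated in one small class per provider.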

The goal is to build an architecture that can evolve. If you’re staring at your roadmap and feeling lost on how to weave synthetic voices into your stack, contact our team for custom voice integration. Let’s make sure your AI doesn't just talk—let’s make sure it’s actually heard.

Frequently Asked Questions

How do I ensure my TTS system sounds natural in different languages?

Favor models trained on native-speaker recordings over those built from machine-translated datasets. Native-speaker data captures the unique linguistic rhythm and breathing patterns that generic models miss.

What is the difference between standard and neural TTS voices?

Standard TTS typically stitches together pre-recorded snippets (concatenative synthesis), which can feel disjointed and robotic. Neural TTS uses deep learning models to predict human-like rhythms and intonation, resulting in a fluid, conversational output.

Is it possible to use one voice for multiple languages?

Yes, many modern platforms offer multilingual support, but you must watch for accent leakage. Always conduct A/B testing with native speakers of the target languages to ensure the voice doesn't sound like a non-native speaker.

What is the standard for real-time latency in 2026?

Sub-200ms is the standard for conversational agents. Anything slower is perceived as a "laggy" interface, which severely degrades user engagement. For more technical specifications, review the Azure AI Speech FAQ.

Why is SSML important for my TTS strategy?

SSML provides the granular control needed for pauses, pacing, and emotional emphasis, plus breaths on providers that support them. It is the essential tool for turning a generic voice into a brand-specific, expressive character within your application.