Is Voice Cloning Legal?

TL;DR

- Voice cloning is legal only with explicit written consent.

- The NO FAKES Act establishes federal property rights for voices.

- Voices are now legally classified as protected biometric property.

- Unauthorized digital replicas carry heavy federal liability for creators.

- Ethical tools are required to ensure legal consent verification.

Let’s cut to the chase. The short answer is yes, voice cloning is legal.

But—and this is a massive, flashing neon "but"—it is legal only if you have explicit, written consent and you are playing strictly by the transparency rules. If you are cloning a voice without permission, even if you think it’s for a "harmless" meme, a non-profit parody, or a quick internal demo, you are walking blindfolded into a minefield.

Rewind three years. The audio landscape was the Wild West. It was chaos. You could clone a celebrity, have them endorse a rug-pull crypto scam, and when the cease-and-desist letter showed up, you could just shrug and claim "satire."

Those days? They are dead and buried.

Welcome to 2026. The legal framework hasn't just caught up to the technology; it has tackled it to the ground. Ignorance is no longer a defense; it’s a liability. Thanks to a slate of aggressive federal acts and state statutes, your voice is now recognized as biometric property. Stealing it is no different than hot-wiring a car.

If you are a creator, a marketer, or a business owner looking to use this tech without getting served a subpoena, your first move is abandoning the shady "free" sites. You need to be using ethical voice cloning tools that prioritize consent verification.

Here is the unvarnished truth on how to stay out of court in 2026.

The Federal "NO FAKES" Act: The New Law of the Land

For decades, copyright law was the wrong tool for the job. It was a dull knife trying to cut a steak.

Why? Because copyright protects recordings, not voices. If you happened to sound exactly like Morgan Freeman and you recorded an audiobook, copyright law didn't care. It was your recording. That legal loophole allowed AI to flourish unchecked for years.

That loophole slammed shut with the NO FAKES Act (Nurture Originals, Foster Art, and Keep Entertainment Safe Act).

This isn't a suggestion. It isn't a guideline. It is the dominant federal framework. The Act establishes a federal property right in a person's voice and likeness. Before this passed, lawyers had to rely on a messy patchwork of state-level "Right of Publicity" laws. If you got sued, it mattered whether the server was in Florida or France. Now? It is a federal issue.

If you create a digital replica of someone's voice without authorization, you are violating their federal property rights. Period.

The Liability Shift

The most critical shift here is who gets the blame. In the past, platforms like YouTube or X (formerly Twitter) could hide behind "Safe Harbor" provisions. They claimed they were just neutral bulletin boards, not responsible for what users posted.

The NO FAKES Act pierces that shield. Both the creator of the clone and the platform hosting it can be held liable for unauthorized digital replicas. This is why you see platforms banning users so aggressively now. If you want to read the fine print (and you really should), the text of the NO FAKES Act lays out a clear path for litigation against anyone trafficking in unauthorized voice models.

The "TAKE IT DOWN" Act & Deepfake Regulations

While the NO FAKES Act handles the property rights (the "who owns it" part), the TAKE IT DOWN Act (Tools to Address Known Exploitation by Immobilizing Technological Deepfakes on Websites and Networks Act) handles the cleanup.

Signed into law by President Trump, this legislation was a direct response to the painfully slow reaction times of major social platforms during the deepfake crisis of 2024. Back then, if a deepfake of a CEO or a pop star went viral, it could stay up for days while legal teams argued over Terms of Service violations. By the time it was removed, the damage was done.

The TAKE IT DOWN Act changed the physics of the internet. It requires covered platforms to remove non-consensual intimate imagery, including AI-generated deepfakes, within 48 hours of a valid request. It forces platforms to stop being passive hosts and start being active gatekeepers.

The speed at which these laws have been implemented is staggering. We went from zero regulation to full enforcement in under 24 months.

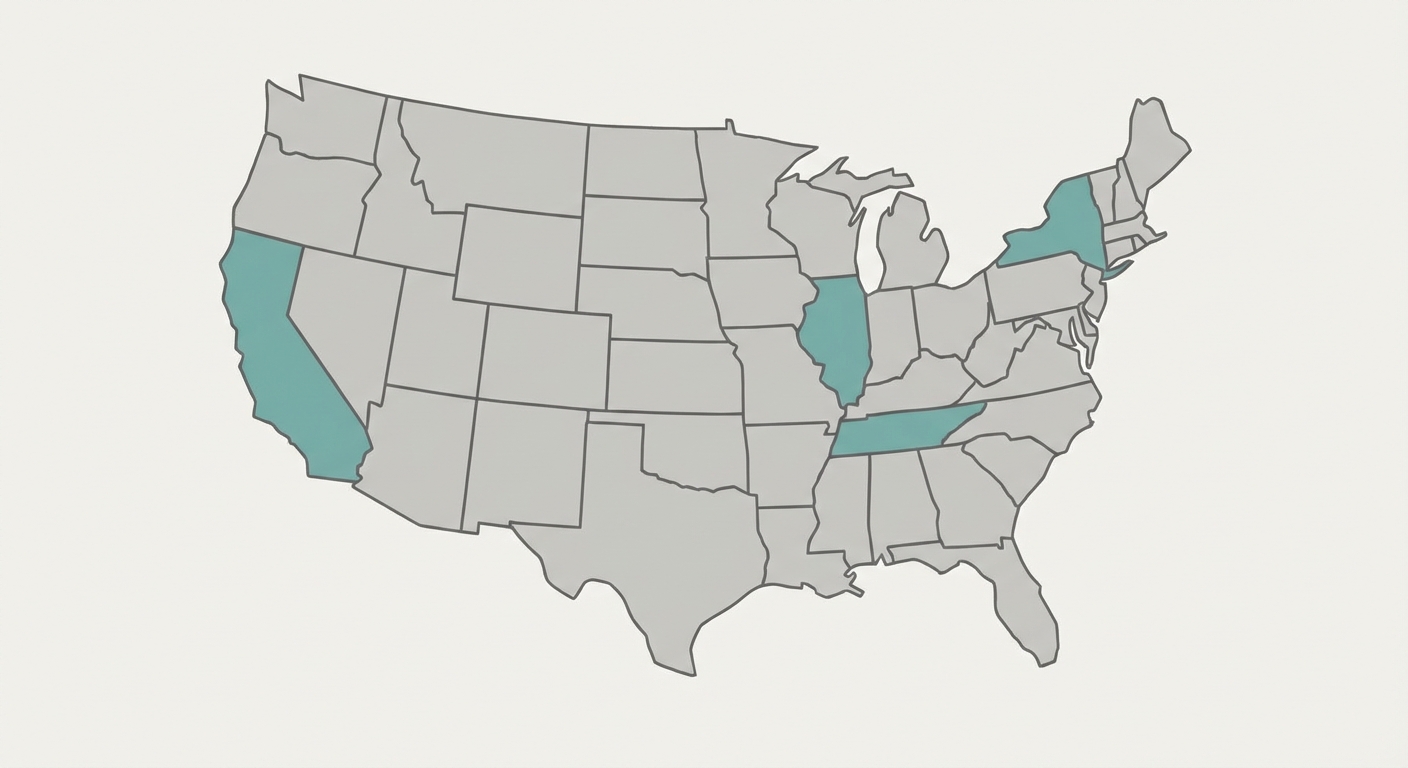

State-Level Protections: Why California and New York are Different

Here is where it gets tricky. Federal law sets the floor—the bare minimum protection. But states? They set the ceiling.

If you are operating in California, New York, or Tennessee, the rules are significantly stricter. These states have robust "Right of Publicity" statutes that treat your voice as a commercial asset that survives even after death.

Tennessee led the charge with the ELVIS Act, specifically designed to protect its massive music industry. But for most tech companies and content creators, California is the state to watch.

California’s AB 853 (Transparency)

California didn't just ban theft; it mandated transparency. The California AI Transparency Act (SB 942, as amended by AB 853) requires that any AI-generated content used in a commercial or political context be clearly labeled.

You cannot have an AI agent call a customer without announcing it's a bot. You cannot have an AI voice narrate an ad without a disclosure.

This isn't just about watermarking the metadata for the nerds to find; it’s about consumer awareness. According to the California AI Transparency Act (AB 853), failing to label AI content is now considered a deceptive trade practice.

Think about that. If you are running a faceless YouTube channel or a marketing campaign, you need to know where your audience lives. If you deceive a consumer in Sacramento, you are on the hook in Sacramento.

Case Precedents: How Courts are Ruling in 2026

Laws are just theory until a judge bangs a gavel. In 2026, we have seen the first major rulings that define how these laws actually work in the real world. The days of theoretical debate are over.

Lehrman & Sage v. Lovo, Inc.

This is the case that terrified every AI startup in Silicon Valley.

Lovo, a voice cloning company, was sued by voice actors Paul Lehrman and Linnea Sage. The core issue wasn't just that their voices were cloned—it was how the data was obtained. The actors had provided voice samples for "academic use only" years prior. Somehow, those samples allegedly ended up in a commercial training dataset.

The court's decision in Lehrman & Sage v. Lovo, Inc. made clear that training data is not a lawless void. The judge dismissed the actors' federal trademark claims and most of their copyright claims, but let their breach-of-contract, New York right-of-publicity, and consumer-protection claims proceed. You cannot strip-mine voice data under false pretenses.

The lesson: If you use a "free" voice sample that you found online, you are likely inheriting the legal liability of whoever stole it first.

Scarlett Johansson vs. OpenAI

No lawsuit was ever filed here, but the dispute put the "soundalike" doctrine back in the spotlight.

OpenAI said it did not use Johansson's voice. It shipped a voice ("Sky") that sounded eerily similar after she had refused to license hers, and later pulled it. The legal takeaway for 2026 is clear: Intent matters.

If you cannot get the rights to a celebrity's voice, you cannot simply hire a soundalike (or train an AI on a soundalike) to mimic them. If the intent is to evoke their persona for commercial gain, you are liable. You can't loophole your way out of identity theft.

Commercial vs. Personal Use: Where is the Line?

This is where most content creators get into trouble. There is a persistent myth that as long as you aren't selling the audio directly, it's "Fair Use."

In 2026, that definition has narrowed dramatically.

The "Parody Defense" is Dead

The classic excuse—"It's just a meme, bro"—doesn't hold water if the platform monetizes the content.

If you clone a politician saying something absurd and post it on X or TikTok, and that platform runs ads against your content, money is changing hands. Under the NO FAKES Act, if that digital replica causes "reputational harm" or confusion, the parody defense collapses. If your fake audio tricks the audience, it's not a parody; it's disinformation.

Commercial Safety

If you are a business, your risk tolerance should be zero.

Using an unauthorized clone for an internal training video, an IVR system, or a social media ad is an open invitation to get sued. To avoid litigation, businesses should rely only on a licensed AI voice generator that guarantees royalty-free clearance.

Think of it this way: Paying for a license is infinitely cheaper than paying a settlement.

Copyright of the Output

Who owns the song?

If you use AI to generate a track with your own lyrics but a cloned voice, the ownership is split. The US Copyright Office's AI guidelines clarify that AI-generated recordings generally lack copyright protection because they lack "human authorship."

However, you still own the copyright to the underlying script or lyrics. You own the song on paper, but you don't own the specific audio file generated by the machine. It's a weird nuance, but an important one.

Decision Guide: Can I Clone This Voice?

Navigating these laws can be a headache. To simplify it, follow this logic flow before you hit "generate."
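One way to picture that flow is as a short function. The Python below is only a rough sketch of the rules of thumb described in this article, not a statement of what any statute requires. The names `VoiceRequest` and `can_clone` and every field on the record are hypothetical, chosen just for illustration.

```python
from dataclasses import dataclass


@dataclass
class VoiceRequest:
    """Hypothetical description of a planned clone (all fields are assumptions)."""
    is_own_voice: bool = False
    has_written_consent: bool = False      # signed release covering AI use
    subject_deceased: bool = False
    estate_authorized: bool = False
    commercial_use: bool = False
    imitates_refused_person: bool = False  # soundalike of someone who said no


def can_clone(req: VoiceRequest) -> tuple[bool, str]:
    """Return (allowed, reason), following this article's rules of thumb."""
    # A soundalike built to evoke someone who declined is out, whatever else is true.
    if req.imitates_refused_person:
        return False, "soundalike of a person who declined to license"
    if req.is_own_voice:
        return True, "own voice"
    if req.subject_deceased:
        if req.estate_authorized:
            return True, "estate authorized"
        if not req.commercial_use:
            return True, "personal use that stays in your house"
        return False, "commercial use of a deceased voice needs the estate"
    if req.has_written_consent:
        return True, "written consent on file"
    return False, "no written consent"


allowed, reason = can_clone(VoiceRequest(subject_deceased=True, commercial_use=True))
print(allowed, reason)
```

The checks run from most to least restrictive, so a single red flag stops the request before any "yes" branch is reached.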

The "Zombie" Clause: Post-Mortem Voice Rights

One of the most morbid yet necessary developments in 2026 law is the handling of the deceased.

Can you clone your late grandfather to read a bedtime story to your kids? Generally, yes—that is personal use. It stays in your house.

Can you clone a deceased celebrity to star in a new commercial? Absolutely not. Not unless you pay the estate.

Most statutes now include post-mortem protections. The "Right of Publicity" doesn't die with the person; it transfers to their estate like a house or a bank account. Unauthorized reanimation of a deceased public figure's voice is a violation of the estate's property rights. Estates are now becoming incredibly aggressive in protecting the digital legacy of stars who passed away decades ago.

How to Clone Voices Legally (Compliance Checklist)

If you want to use this technology without looking over your shoulder, follow this compliance workflow.

- Get Written Consent: Verbal agreements are hard to prove in court. You need a "Voice Model Release Form" that specifically grants permission for AI training and synthesis. Standard recording contracts from five years ago do not cover AI.

- Verify the Source: Never download random clips from YouTube or "abandonware" sites. If you can't trace the chain of custody, don't use the data. Data laundering is real.

- Use Transparent Labeling: Label the audio as "AI-Generated." In the EU and California, this is mandatory. In other places, it's just good insurance against fraud claims.

- Choose the Right Tools: Avoid "free" tools that hide data-scraping clauses in their Terms of Service. While there are free instant voice cloning tools, enterprise users should ensure the platform offers legal indemnification. You want a partner that takes responsibility for their model, not one that offloads the risk to you.
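If your team tracks projects in code, the checklist above can be turned into a simple pre-flight check. This is a minimal sketch under stated assumptions: `CloneProject`, `missing_steps`, and all field names are made up for this example, and passing the check says nothing about whether a given release form would actually hold up.

```python
from dataclasses import dataclass


@dataclass
class CloneProject:
    """Hypothetical record of one voice-cloning job (field names are assumptions)."""
    release_signed: bool      # written "Voice Model Release Form" is on file
    release_covers_ai: bool   # release explicitly names AI training and synthesis
    source_traceable: bool    # chain of custody for every sample is documented
    output_labeled: bool      # audio is marked "AI-Generated"
    vendor_indemnifies: bool  # platform offers legal indemnification


def missing_steps(p: CloneProject) -> list[str]:
    """List the checklist items that are not yet satisfied."""
    checks = [
        (p.release_signed and p.release_covers_ai,
         "Get written consent covering AI training and synthesis"),
        (p.source_traceable, "Verify the source of every sample"),
        (p.output_labeled, 'Label the audio as "AI-Generated"'),
        (p.vendor_indemnifies, "Choose a tool that offers indemnification"),
    ]
    return [label for ok, label in checks if not ok]


# Example: an old recording contract is signed but never mentions AI,
# and the output has not been labeled yet.
project = CloneProject(
    release_signed=True,
    release_covers_ai=False,
    source_traceable=True,
    output_labeled=False,
    vendor_indemnifies=True,
)
for step in missing_steps(project):
    print("TODO:", step)
```

Here the consent and labeling items come back as open, which mirrors the warning above that a standard recording contract from years ago does not cover AI.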

Frequently Asked Questions

Can I legally clone a celebrity's voice for a non-profit meme?

Likely no. Under the 2026 NO FAKES Act, unauthorized digital replicas that cause confusion or harm are actionable, regardless of profit. The "parody" defense is much narrower now. If it goes viral or defames the subject, you're in trouble.

Who owns the copyright to an AI-generated song featuring my voice?

You own the underlying lyrics and composition, but the AI audio recording itself generally cannot be copyrighted. However, you retain the "Right of Publicity" over your voice likeness. This means no one else can use that track commercially without asking you first.

Is it illegal to use voice cloning for customer service agents?

It is legal, but under the 2025 AI Transparency Acts (CA/EU), you must disclose to the caller that they are speaking with an AI agent. Failing to disclose this is considered a deceptive trade practice.

What is the penalty for unauthorized voice cloning?

Penalties under the NO FAKES Act start at $5,000 per violation. But that's just the starter. It can escalate into the millions if actual damages (lost income, reputational harm) or viral reach can be proven. Courts are also increasingly awarding punitive damages to deter future theft.