How to Convert Text into Natural-Sounding Speech

TL;DR

- Shift from basic robotic bots to high-quality neural voice acting

- Understand how Neural TTS uses deep learning to produce better rhythm and prosody

- Optimize scripts specifically for ear-friendly, natural-sounding audio production

- Leverage the $6 billion market shift toward studio-grade AI narration

Converting text to speech that doesn't sound like a sci-fi villain isn't about picking the right software. It's about the workflow.

Stop hitting "Generate" and praying. If you want audio that actually fools the ear, you have to stop treating AI like a read-aloud bot and start treating it like a high-maintenance voice actor. It needs direction. It needs a script that makes sense. It needs you to tell it when to breathe.

The era of the "GPS robot" is dead. Buried. In 2026, if your audio sounds synthetic, you aren't just annoying your audience—you're losing them. We’ve moved past novelty into necessity. Modern AI voices can whisper, scream, crack with emotion, and convey empathy. This isn't just a toy for TikTok creators anymore; it’s a business standard.

The money follows the quality. With the Global Text-to-Speech Market Report projecting the industry to hit nearly $6 billion by 2026, the bar is no longer on the floor. It's in the stratosphere. Audiences expect studio-grade narration, whether they're watching a corporate training module or a three-hour YouTube video essay.

This guide is your production manual. We aren't just listing tools. We’re breaking down the invisible work—the craft—that makes AI sound indistinguishable from a human being.

What is Neural TTS (and Why Should You Care)?

To master the tool, you have to understand the engine.

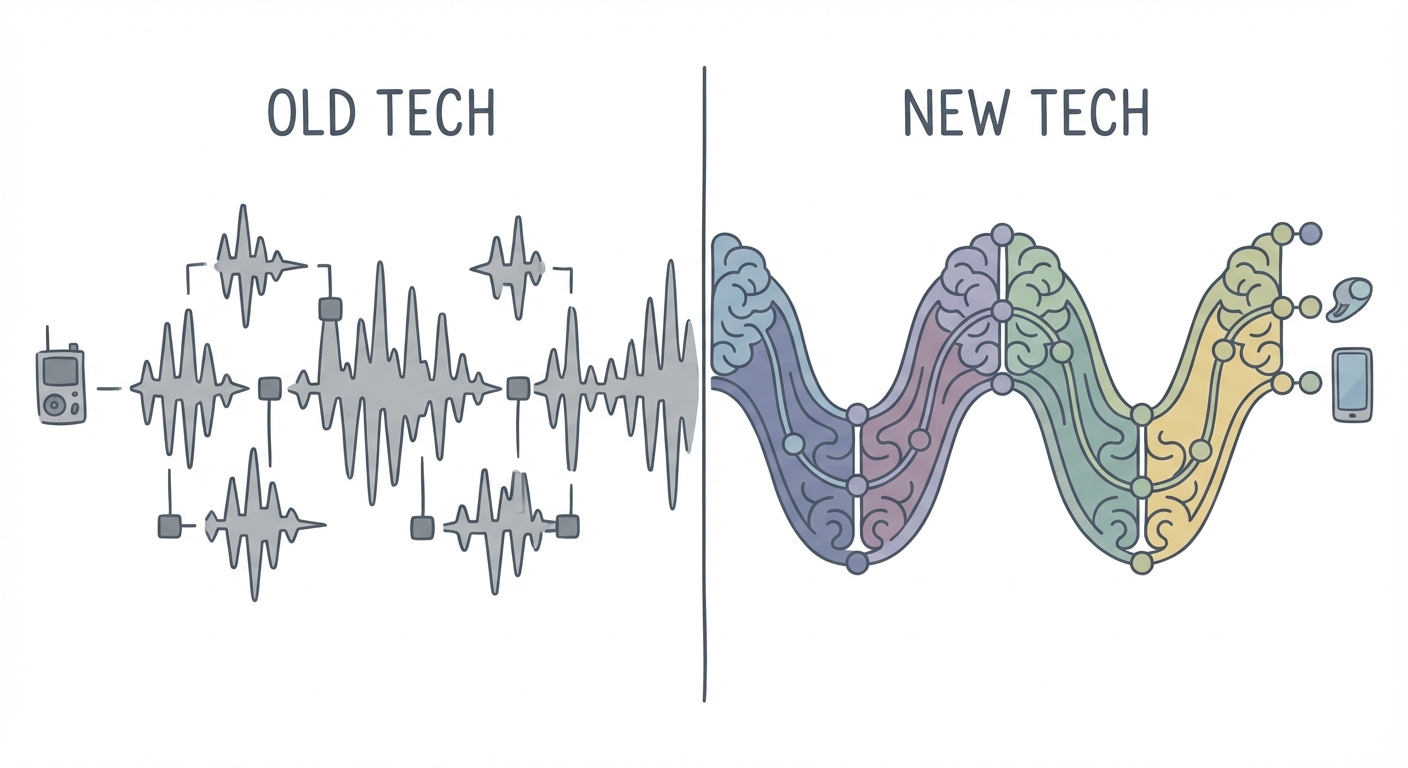

Old-school text-to-speech (TTS) was "Concatenative." Think of it like a ransom note. You cut letters out of a magazine and glue them together. That’s what early TTS did with audio samples. It spliced pre-recorded sounds, resulting in that choppy, jagged rhythm we all associate with Windows 95.

Modern AI uses "Neural TTS." This is deep learning. Instead of gluing sounds together, a neural network reads the room. It analyzes the entire sentence to understand context before it speaks a single word. It predicts prosody—the rhythm, stress, and musicality of speech. It knows that "record" (the noun) sounds different from "record" (the verb) because it understands where the word sits in the sentence.

Want to geek out on the tech? Read our deep dive on neural text-to-speech architectures. It explains why a neural network knows how to "breathe" while a standard engine just marches forward until it hits a wall.

Step 1: The "Pre-Processing" Phase – Scripting for the Ear

Here is the secret nobody tells you: AI voices are terrible at reading bad writing.

Most users fail because they paste raw text—stuff designed for the eye—directly into a generator. But writing for the ear is a completely different beast. When you read silently, your brain skips over awkward phrasing. When you listen? Every clunky sentence sticks out like a sore thumb.

Before you generate a single second of audio, you need to "pre-process" your script.

Phonetic Spelling

AI models are smart, but they get confused by proper nouns, acronyms, and niche jargon. If you type "Kveeky," a standard model might say "Kuh-vee-kee."

Don't hope it gets it right. Force it.

- Don't type: "Kveeky"

- Do type: "Che-vk" (or whatever phonetic combination creates the sound you need).

Treat the input box like a stage direction. If the AI is rushing through a complex name, break it down phonetically.
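If your scripts reuse the same tricky names, a small find-and-replace pass keeps the respellings consistent across every generation. The sketch below assumes a hand-maintained dictionary. The RESPELLINGS entries and the apply_respellings helper are hypothetical examples, not part of any TTS product.

```python
import re

# Hypothetical respelling table: written form -> spelling the engine
# pronounces correctly. Confirm each entry by ear with your chosen voice.
RESPELLINGS = {
    "Siobhan": "Shi-von",
    "Nginx": "engine-x",
}


def apply_respellings(script: str, respellings: dict[str, str]) -> str:
    """Replace each listed word with its phonetic spelling before generation."""
    for written, spoken in respellings.items():
        # \b word boundaries stop "Nginx" from matching inside "Nginxes".
        # The lambda returns the spelling literally, so backslashes in it
        # are never treated as regex escapes.
        pattern = rf"\b{re.escape(written)}\b"
        script = re.sub(pattern, lambda _m, s=spoken: s, script)
    return script


print(apply_respellings("Ask Siobhan how Nginx is configured.", RESPELLINGS))
# Ask Shi-von how engine-x is configured.
```

Keeping the dictionary next to your scripts means a pronunciation you fix once stays fixed in every future video.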

Punctuation Hacks

Punctuation is your brake pedal. It controls the pacing.

- Commas (,): Use these for micro-breaths. If a sentence feels like a race car out of control, insert commas where a human would naturally take a sip of air.

- Ellipses (...): These create a trailing thought or a hesitation. They add a layer of "thinking" to the voice.

- Periods (.): These are hard stops. Use them to break up run-on sentences. If your AI sounds robotic, it’s usually because the sentence is too long and the pitch has flattened out.
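The period rule is easy to check automatically before you spend credits on a generation. This is a rough sketch: the 20-word threshold is an assumption to tune by ear, and the sentence splitter is deliberately naive, so abbreviations such as "e.g." will produce false breaks.

```python
import re


def flag_long_sentences(script: str, max_words: int = 20) -> list[str]:
    """Return sentences longer than max_words so they can be split with periods."""
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", script.strip())
    return [s for s in sentences if len(s.split()) > max_words]


draft = (
    "Neural voices are impressive. "
    "But when a single sentence keeps stacking clause after clause with no "
    "place to land, the engine has nowhere to reset its pitch and the whole "
    "line starts to drone."
)
for sentence in flag_long_sentences(draft):
    print("Consider splitting:", sentence)
```

Only the second sentence in the draft is flagged; splitting it at the comma gives the voice a place to reset.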

Step 2: The "Human-in-the-Loop" Workflow

The biggest lie in AI audio is the "one-click" miracle. It doesn't exist.

Great audio is a cycle of generation and refinement. The best producers use a "Human-in-the-Loop" workflow. You generate a baseline, listen with a critical ear, and then go back to tweak the dials.

The process looks like this:

- Generate: Run your pre-processed script.

- Listen: Close your eyes. Seriously, close them. Where does the voice sound bored? Where is it rushing?

- Adjust Speed: Most AI voices speak like they had too much coffee. Slowing them down by 5-10% often adds gravitas and clarity.

- Add Pauses: Manually insert silence between distinct ideas.

- Regenerate: Rinse and repeat until you cross the uncanny valley.
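If your tool accepts SSML, the speed and pause adjustments from this loop can be applied in code, so each regeneration changes only the numbers you are testing. A minimal sketch, assuming an engine that honors the standard prosody and break tags. build_ssml is a hypothetical helper, and the 92% rate (8% slower) and 600 ms pause are starting points to tune, not vendor recommendations.

```python
from xml.sax.saxutils import escape


def build_ssml(ideas: list[str], rate_percent: int = 92, pause_ms: int = 600) -> str:
    """Slow the whole read slightly and insert a fixed silence between ideas."""
    pause = f'<break time="{pause_ms}ms"/>'
    # escape() turns &, < and > into entities so the script text
    # cannot break the surrounding markup.
    body = pause.join(escape(idea) for idea in ideas)
    return f'<speak><prosody rate="{rate_percent}%">{body}</prosody></speak>'


print(build_ssml(["Our first quarter was strong.", "Then R&D hit a wall."]))
```

Slowing to 92% stretches the spoken portion of a 60-second read to roughly 65 seconds (60 / 0.92 ≈ 65.2), so budget for the longer runtime when you time audio against video.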

Step 3: Advanced Polishing with SSML (The Cheatsheet)

If punctuation is the hammer, SSML (Speech Synthesis Markup Language) is the scalpel.

Think of SSML as HTML for voice. It lets you wrap specific words or phrases in tags that tell the AI exactly how to perform them, down to the length of every breath.

But you don't need to memorize the documentation. You just need these essentials:

The "Copy-Paste" Cheatsheet

The Dramatic Pause:

<break time="500ms"/>

Use this to create tension before a reveal.

The Emphasis:

<emphasis level="strong">This is important.</emphasis>

Use this to make the AI punch a specific keyword.

The Whisper:

<amazon:effect name="whispered">I shouldn't be telling you this.</amazon:effect>

(Note: break and emphasis are standard SSML tags, while amazon:effect is an Amazon Polly extension. Syntax varies by platform, but the concept is universal.)

Using these tags prevents the "monotone drone" that plagues lazy AI content. It forces the engine to vary its pitch and volume. That variation? That's the essence of being human.
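The pause and emphasis tags combine naturally for a reveal. The sketch below assembles them with Python's standard library and checks that the result is well-formed XML before you paste it into a tool. dramatic_line is a hypothetical helper; it sticks to standard SSML tags and leaves out the whisper effect, which is specific to Amazon Polly.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def dramatic_line(setup: str, reveal: str, keyword: str, pause_ms: int = 500) -> str:
    """Pause before the reveal and stress one keyword inside it."""
    safe_reveal = escape(reveal)
    safe_keyword = escape(keyword)
    # Wrap only the first occurrence so the stress lands on a single word.
    stressed = safe_reveal.replace(
        safe_keyword, f'<emphasis level="strong">{safe_keyword}</emphasis>', 1
    )
    ssml = f'<speak>{escape(setup)}<break time="{pause_ms}ms"/>{stressed}</speak>'
    ET.fromstring(ssml)  # raises ET.ParseError if the markup is not well-formed
    return ssml


print(dramatic_line("And the winner is", "the blue team.", "blue"))
```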

Choosing the Right Tool: Features That Matter in 2026

Not all engines are built the same. In 2026, you shouldn't care about the number of voices a tool has. You should care about the control it gives you over them.

Emotional Intelligence

Look for tools that offer "styles." A news report needs a different delivery than a bedtime story. Top-tier tools allow you to select "Newscaster," "Angry," "Cheerful," or "Terrified" modes. This isn't just a filter; it fundamentally changes the prosody of the generation.

Voice Cloning

Sometimes stock voices aren't enough. You want your brand voice. Cloning technology has advanced to the point where some tools can build a high-fidelity replica from as little as 30 seconds of audio. This is crucial for maintaining brand consistency across thousands of videos without burning out your human talent.

The Balanced Solution

If you're looking for a tool that balances emotion with ease of use, try Kveeky's AI Voice Generator. It is designed specifically to handle the nuances of video production, bridging the gap between raw text and emotive performance.

Budget Options

For creators on a budget, checking out free text-to-speech software for YouTube is a great starting point. While free tools often lack the granular SSML controls of premium platforms, they are excellent for prototyping and simple narration.

Top Use Cases for Natural-Sounding Speech

Why go through all this trouble? Because the utility of realistic audio extends far beyond just saving money on voice actors.

Video Production

Faceless YouTube channels are a massive industry. The difference between a channel that grows and one that stagnates is often the voiceover quality. Viewers will tolerate bad visuals, but they will click off immediately if the audio hurts their ears.

Accessibility and Time-on-Site

Publishers are increasingly having articles read aloud to boost time-on-site. Offering an audio version of your blog post makes your content accessible to the visually impaired and convenient for commuters. It turns passive readers into active listeners.

Corporate Training

Let's be honest: nobody reads the employee handbook. But they might listen to an engaging, podcast-style breakdown of it. Replacing boring PDFs with engaging audio courses improves retention rates and makes compliance training less of a chore.

Ethical Considerations: Clones and Copyright

We cannot talk about AI voice without addressing the elephant in the room: Ethics.

With great power comes great responsibility. The rise of deepfakes has made voice security a critical issue. Enterprise users should prioritize vendors with a SOC 2 report, which shows that an independent auditor has reviewed how they protect customer data, and should confirm that their data (including their cloned voices) is encrypted and secure.

Furthermore, distinguish between "Deepfakes" and "Licensed AI." Ethical platforms pay voice actors for the data used to train their models. When you use a reputable tool, you are using a licensed asset. When you use a shady, free GitHub repository, you might be using a pirated voice. Stick to platforms that prioritize consent and compensation.

Frequently Asked Questions

Why does my AI voice still sound robotic?

It usually comes down to three things: lack of pitch variation, sentences that are too long (run-ons), or a lack of "breathing" pauses. Try breaking your long sentences into two shorter ones. Use commas liberally to force the AI to pause and reset its pitch.

Can I monetize AI-generated voiceovers on YouTube?

Yes, but it depends on the commercial rights of the tool you are using. Free plans often require attribution or forbid commercial use. Always check YouTube's monetization policies and your tool's terms of service to ensure your AI content complies.

How do I fix the pronunciation of names in text-to-speech?

Use phonetic spelling. If the AI mispronounces "Siobhan," type "Shi-von." Many advanced editors also have a "Find and Replace" or "Pronunciation Dictionary" feature where you can use the International Phonetic Alphabet (IPA) to permanently fix specific words.
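Engines that support SSML usually also accept the standard phoneme tag, which pins a word to an exact IPA transcription instead of a respelling. A sketch under assumptions: apply_lexicon and the lexicon entry are illustrative, the transcription should be checked by ear, and engines differ in which IPA symbols they accept.

```python
import re
from xml.sax.saxutils import escape, quoteattr

# Illustrative lexicon: written word -> IPA transcription (verify by ear).
IPA_LEXICON = {"Siobhan": "ʃɪˈvɔːn"}


def apply_lexicon(text: str, lexicon: dict[str, str]) -> str:
    """Wrap each lexicon word in an SSML <phoneme> tag; escape everything else."""
    if not lexicon:
        return f"<speak>{escape(text)}</speak>"
    # Longest entries first so a multi-word entry wins over a word it starts with.
    words = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, words)) + r")\b")
    parts, last = [], 0
    for match in pattern.finditer(text):
        parts.append(escape(text[last:match.start()]))
        word = match.group(1)
        # quoteattr adds the surrounding quotes and escapes the attribute value.
        parts.append(
            f'<phoneme alphabet="ipa" ph={quoteattr(lexicon[word])}>{escape(word)}</phoneme>'
        )
        last = match.end()
    parts.append(escape(text[last:]))
    return "<speak>" + "".join(parts) + "</speak>"


print(apply_lexicon("Say hi to Siobhan & Tom.", IPA_LEXICON))
```

A built-in pronunciation dictionary, where your tool offers one, does the same job without touching the script itself.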

Is it legal to clone a celebrity's voice?

Generally? No, not without their explicit permission. Using a celebrity's voice for commercial gain without consent can violate their right of publicity and lead to lawsuits. It is safer, and more ethical, to use stock voices or clone your own voice.

Conclusion

Natural speech isn't just about the software you buy; it's about the script you write and the settings you tweak. The difference between a robotic drone and a compelling narrator is often just a few well-placed commas and a 500ms pause.

Don't just copy-paste and hope for the best. Be a director. Take your first script, apply the punctuation hacks and SSML tags we covered, and run it through Kveeky’s generator. Hear the difference between "reading" and "speaking."