Top Voice Cloning Solutions: Instant Voice Duplication

TL;DR

- Explains the shift from robotic GPS voices to realistic neural synthesis

- Details how zero-shot technology clones voices using 10-second samples

- Breaks down the technical pipeline from encoder to vocoder output

- Analyzes the AI voice cloning market's projected growth past $9 billion by 2031

- Compares instant duplication versus professional-grade studio cloning

Remember when AI voices sounded like a constipated GPS navigator? Those days are dead.

In 2026, the tech doesn't just read words. It captures timbre, cadence, and that subtle, gritty "grain" that makes a human voice sound, well, human. If you’re a creator, developer, or brand, cloning a voice isn't some futuristic parlor trick anymore—it’s a survival mechanism. It’s the only way to scale content without burning out your talent or destroying your vocal cords.

The money follows the utility. According to market size reports by Mordor Intelligence, this industry is on track to smash past $9 billion by 2031. Why? Because the bottleneck is you. You cannot physically record every personalized marketing blast, every localized video dub, or every NPC line for a sprawling RPG.

Instant voice cloning is the bridge. It turns seconds of reference audio into hours of studio-quality content. But let’s be real: not all clones are created equal. Some capture the soul; others just hit the pitch.

This guide cuts through the noise. We’re ranking the top solutions based on latency, emotion, and localization, and—crucially—teaching you the forgotten art of recording an input sample that doesn't suck.

1. How Does Instant Voice Cloning Actually Work?

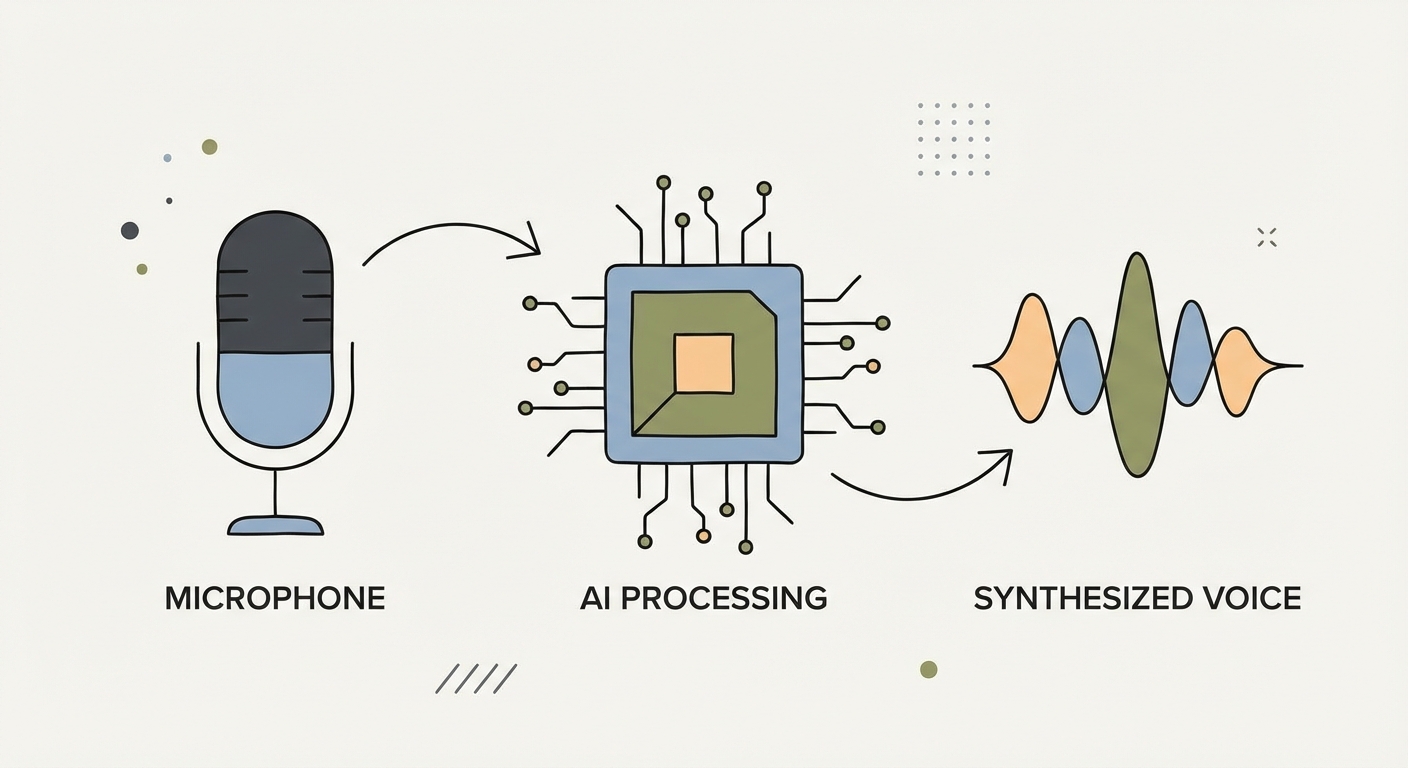

To understand why some tools sound uncannily real and others sound like a B-movie robot, look at the engine. We’ve finally ditched "concatenative synthesis," which was basically gluing pre-recorded sounds together like a ransom note made of magazine clippings.

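Today, we run on Neural Audio Synthesis. Deep learning. Instead of stitching recordings together, models trained on huge amounts of human speech learn how voices behave and generate brand-new audio from scratch.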

The game-changer is Zero-Shot technology. Used to be, you needed hours of data to train a model. Now? Zero-Shot voice cloning lets the AI extract a unique "voice print" (a mathematical signature of your voice) from just 3 to 10 seconds of audio. It doesn't "learn" to speak like you over a week; it infers how you should sound from the massive datasets of human speech it was trained on.
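Under the hood, a "voice print" is typically a fixed-length vector of numbers (a speaker embedding), and one common way to compare two of them is cosine similarity: clips from the same speaker should point in nearly the same direction. The sketch below is illustrative only. The four-dimensional vectors are made up (real embeddings come from a trained encoder and usually have hundreds of dimensions), and the 0.8 threshold is a hypothetical value, not one any particular tool uses.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embeddings: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


# Made-up toy embeddings; a real encoder would produce these from audio clips.
alice_clip_1 = [0.90, 0.10, 0.30, 0.20]
alice_clip_2 = [0.85, 0.15, 0.35, 0.10]
bob_clip = [0.10, 0.80, -0.20, 0.50]

SAME_SPEAKER_THRESHOLD = 0.8  # hypothetical cut-off for this illustration

for name, clip in [("alice_clip_2", alice_clip_2), ("bob_clip", bob_clip)]:
    score = cosine_similarity(alice_clip_1, clip)
    print(f"{name}: similarity={score:.2f}, same speaker? {score >= SAME_SPEAKER_THRESHOLD}")
```

With these toy numbers, Alice's two clips score about 0.99 and Bob's clip about 0.22. Real speaker-verification systems tune the threshold on labeled data, but the comparison is the same idea.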

The pipeline usually looks like this:

- The Encoder: You drop in a short sample (anywhere from a few seconds to 30 seconds). The AI ignores the words and extracts the "embedding": the DNA of the voice (tone, pitch, accent, breath).

- The Synthesizer: You type your text. The AI converts it into speech sounds (phonemes), predicts rhythm and intonation, and combines all of that with the voice embedding to produce an intermediate "sound map" (usually a mel spectrogram).

- The Vocoder: A neural vocoder turns that spectrogram into a raw waveform that sounds like you.
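To make the hand-offs concrete, here is a shape-only sketch of that three-stage flow. It is not a working voice model: every stage is a placeholder that returns silence of the right size. In many published systems of this kind, the text and the voice embedding meet in the synthesizer, which outputs a mel spectrogram, and the vocoder's only job is to turn that spectrogram into audio. The sample rate, hop length, mel-band count, embedding size, and the fixed frames-per-character rule are all assumptions chosen for illustration.

```python
SAMPLE_RATE = 22_050  # assumed output sample rate (Hz)
HOP_LENGTH = 256      # assumed audio samples per spectrogram frame
N_MELS = 80           # assumed mel bands per spectrogram frame
EMBED_DIM = 256       # assumed speaker-embedding size
FRAMES_PER_CHAR = 4   # hypothetical stand-in for a learned duration model


def encoder(reference_audio: list[float]) -> list[float]:
    """Stage 1: reference clip of any length -> fixed-size speaker embedding.
    Placeholder only: a real encoder is a trained neural network."""
    if not reference_audio:
        raise ValueError("need some reference audio")
    return [0.0] * EMBED_DIM


def synthesizer(text: str, speaker_embedding: list[float]) -> list[list[float]]:
    """Stage 2: text + speaker embedding -> mel spectrogram (frames x N_MELS).
    Placeholder only: frame count depends on the text, not the reference clip."""
    if len(speaker_embedding) != EMBED_DIM:
        raise ValueError("unexpected embedding size")
    n_frames = len(text) * FRAMES_PER_CHAR
    return [[0.0] * N_MELS for _ in range(n_frames)]


def vocoder(mel: list[list[float]]) -> list[float]:
    """Stage 3: mel spectrogram -> waveform, HOP_LENGTH samples per frame.
    Placeholder only: returns silence of the correct length."""
    return [0.0] * (len(mel) * HOP_LENGTH)


reference = [0.0] * (10 * SAMPLE_RATE)  # stand-in for a 10-second reference clip
embedding = encoder(reference)
mel = synthesizer("Hello from my cloned voice.", embedding)
audio = vocoder(mel)
print(f"embedding: {len(embedding)} values, mel: {len(mel)} frames, "
      f"audio: {len(audio)} samples ({len(audio) / SAMPLE_RATE:.2f} s)")
```

The point to notice: the embedding is the same size whether your reference clip is 3 seconds or 30, while the length of the output depends only on the text you type.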

2. Instant vs. Professional Cloning: Pick Your Lane

The market has split into two tiers. Pick the wrong one and you'll either burn your budget or waste your time.

The "Instant" Clone (Zero-Shot)

- The Commitment: 10–30 seconds of audio.

- The Reality: Upload a file. Five seconds later, that voice can say anything.

- The Use Case: Speed. Social media clips, memes, rough drafts, and personalized sales outreach where "good enough" is perfectly fine.

- The Catch: It struggles with drama. If your sample is calm, making the clone scream "Fire!" will sound weirdly polite.

The "Professional" Clone (Fine-Tuned)

- The Commitment: 30 minutes to 3 hours of dedicated script reading.

- The Reality: The AI is fine-tuned on your recordings, a process that can take hours or days.

- The Use Case: Brand ambassadors, audiobooks, and permanent virtual assistants. This is where you climb out of the "uncanny valley": done right, the clone is nearly indistinguishable from the person.

- The Catch: It’s expensive, slow, and eats computing power for breakfast.

A Note on Latency: Building a bot that talks to humans on the phone? You are fighting a war against milliseconds. You need a voice engine that starts returning audio in under 200 ms. Anything slower, and the conversation feels laggy and awkward.
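If you're shortlisting engines for a phone bot, the number to measure is time to first audio: how long until the first chunk of a streaming response arrives, since playback can start while the rest is still generating. A minimal sketch, assuming your provider's SDK gives you an iterable of audio byte chunks; `fake_tts_stream` is a hypothetical stand-in so the example runs without any network access or API key.

```python
import time
from typing import Iterable, Iterator

BUDGET_S = 0.200  # the ~200 ms target for conversational bots


def time_to_first_audio(stream: Iterable[bytes]) -> tuple[float, int]:
    """Return (seconds until the first non-empty chunk, total bytes received).
    Playback can start at the first chunk, so this is the lag callers hear."""
    start = time.perf_counter()
    first_chunk_s = None
    total_bytes = 0
    for chunk in stream:
        if chunk and first_chunk_s is None:
            first_chunk_s = time.perf_counter() - start
        total_bytes += len(chunk)
    if first_chunk_s is None:
        raise RuntimeError("stream produced no audio")
    return first_chunk_s, total_bytes


def fake_tts_stream(first_chunk_delay_s: float, chunks: int = 5) -> Iterator[bytes]:
    """Hypothetical stand-in for a vendor's streaming text-to-speech response."""
    time.sleep(first_chunk_delay_s)  # simulated model + network delay
    for _ in range(chunks):
        yield b"\x00" * 640  # 20 ms of 16 kHz, 16-bit mono silence


latency_s, size = time_to_first_audio(fake_tts_stream(0.05))
print(f"first audio after {latency_s * 1000:.0f} ms ({size} bytes total); "
      f"within budget: {latency_s < BUDGET_S}")
```

Swap the fake stream for your provider's streaming call and run it a few dozen times; the occasional slow response matters more than the average.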

3. Top Voice Cloning Solutions for 2026 (Ranked by Use Case)

Don't hunt for "the best" tool. Hunt for the one that fixes your specific headache. Here is the landscape right now.

A. The Globalist: Rask AI / Fish Audio (Best for Localization)

Need a CEO to deliver a keynote in English, then seamlessly switch to fluent Japanese and Spanish while keeping their original timbre? This is your stop.

- The Superpower: Cross-lingual synthesis. They don't just translate the text; they translate the voice.

- Why it matters: You unlock global markets without hiring a dozen dubbing actors. The AI handles the lip-sync (in video tools) and the prosody shifts required for different languages.

B. The Emotional Artist: ElevenLabs (Best for Granular Control)

For storytellers and game devs, a flat delivery is a death sentence. ElevenLabs is still the heavyweight champ because of "Speech-to-Speech" and emotional tagging.

- The Superpower: You direct the AI. Use tags or act out the line yourself in a reference file to force the clone to whisper, shout, or crack with emotion.

- The Cost: Pricing is usually character-based. It gets pricey for long-form, but it’s currently the industry benchmark for "acting."
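Character-based pricing is easy to sanity-check before you commit to a long-form project. A rough sketch, assuming you're billed per 1,000 characters including spaces and punctuation. The rate below is made up, so plug in your own plan's numbers, and the six-characters-per-word figure is only a planning rule of thumb.

```python
HYPOTHETICAL_RATE_PER_1K = 0.30  # made-up price per 1,000 characters


def estimate_cost(text: str, rate_per_1k_chars: float) -> tuple[int, float]:
    """Return (billable characters, estimated cost), assuming every character,
    including spaces and punctuation, counts toward the bill."""
    chars = len(text)
    return chars, chars / 1000 * rate_per_1k_chars


def estimate_cost_from_words(words: int, rate_per_1k_chars: float,
                             chars_per_word: float = 6.0) -> float:
    """Planning estimate from a word count; ~6 characters per word
    (about five letters plus a space) is a rough rule of thumb."""
    return words * chars_per_word / 1000 * rate_per_1k_chars


chars, cost = estimate_cost("Welcome back to the channel!", HYPOTHETICAL_RATE_PER_1K)
print(f"short clip: {chars} characters, about ${cost:.4f}")

novel = estimate_cost_from_words(80_000, HYPOTHETICAL_RATE_PER_1K)
print(f"80,000-word audiobook: roughly ${novel:,.2f}")
```

The audiobook line works out to about 480,000 characters, which is why long-form adds up: the bill scales linearly with length and, assuming regenerations are billed too, with every retake.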

C. The User-Friendly Creator: Kveeky (Best for Ease of Use)

Not everyone wants to mess with API keys or complex sliders. Kveeky is the accessible solution for creators who want pro results without a degree in audio engineering.

- The Superpower: Balance. High-fidelity cloning that rivals the "pro" tools, wrapped in an interface built for speed. Perfect for YouTubers and marketers who need to turn a script into a voiceover in minutes.

- Integration: It hooks your cloned voice directly into a powerful Text to Speech Generator, letting you save presets for recurring characters.

D. The Developer's Choice: Play.ht / Resemble AI (Best for API & Latency)

Coding an app where the voice needs to generate in real-time? You need a headless solution.

- The Superpower: Low latency and security. These platforms offer robust APIs to inject voice generation straight into games or support software.

- Security: Leaders in "watermarking," ensuring synthesized audio is trackable—a must-have for enterprise compliance.

4. The "5-Minute Closet Recording Guide": How to Get Studio Quality

Here is the secret the software companies don't tell you: most "bad" AI voices are actually just bad input audio.

Record your sample on a laptop mic in a tiled kitchen? The AI clones the echo. It clones the fridge hum. When you generate text later, that echo gets baked into every single word. It sounds metallic. It sounds robotic.

You don't need a $5,000 studio. You need physics.

The "Golden Sample" Checklist:

- Kill the Reverb (The Pillow Fort): Sound waves bounce off hard walls. That's echo. Open your closet, push the clothes aside, and stick your head in there. Clothes absorb sound. No closet? Build a fortress out of pillows around your mic. A "dead" sound is a perfect sound for AI.

- The "Hang Ten" Rule: Mic placement matters. Make a "shaka" sign (thumb and pinky out). Place your mic that distance from your mouth (about 6 inches). Too close? You get popping sounds. Too far? You get room noise.

- Emotional Consistency: Don't read like a robot. Read with the exact energy you want the clone to have. If you want a hype-man voice, speak with hype in the sample. The AI clones your vibe, not just your vocal cords.

- The Script Matters: Read something with variety. Don't count to 100. Read a news paragraph or a story to give the AI a full buffet of vowels and consonants.
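You can catch the most common input problems before you upload. Here's a minimal pre-flight check for a reference clip using only Python's standard library. It assumes a 16-bit mono WAV, estimates the noise floor from the quietest 10% of 50 ms windows (roughly, the pauses between your phrases), and flags clips outside the 10 to 30 second range used by instant cloning. The -3 dBFS peak and -60 dBFS noise-floor limits are assumed rules of thumb, not requirements of any particular cloning tool.

```python
import array
import io
import math
import wave

# Assumed rule-of-thumb thresholds; tune them for your tool and microphone.
MIN_SECONDS, MAX_SECONDS = 10.0, 30.0
MAX_PEAK_DBFS = -3.0          # keep headroom so loud syllables never clip
MAX_NOISE_FLOOR_DBFS = -60.0  # room noise in the pauses between phrases


def dbfs(level: float) -> float:
    """16-bit sample level in dB relative to full scale (-inf for silence)."""
    return 20 * math.log10(level / 32768) if level > 0 else float("-inf")


def check_sample(source) -> dict:
    """Pre-upload checks for a 16-bit mono WAV (a path or binary file object)."""
    with wave.open(source, "rb") as wf:
        if wf.getsampwidth() != 2 or wf.getnchannels() != 1:
            raise ValueError("this sketch only handles 16-bit mono WAV")
        rate = wf.getframerate()
        # The wave module returns samples in the machine's native byte order.
        samples = array.array("h", wf.readframes(wf.getnframes()))

    duration = len(samples) / rate
    peak = dbfs(max((abs(s) for s in samples), default=0))

    # Noise floor: RMS of 50 ms windows, read at the quietest 10%.
    win = max(1, int(rate * 0.05))
    rms = sorted(
        math.sqrt(sum(s * s for s in samples[i:i + win]) / len(samples[i:i + win]))
        for i in range(0, len(samples), win)
    )
    noise_floor = dbfs(rms[len(rms) // 10]) if rms else float("-inf")

    warnings = []
    if not MIN_SECONDS <= duration <= MAX_SECONDS:
        warnings.append(f"duration {duration:.1f} s is outside {MIN_SECONDS:g}-{MAX_SECONDS:g} s")
    if peak > MAX_PEAK_DBFS:
        warnings.append(f"peaks hit {peak:.1f} dBFS: lower your input gain")
    if noise_floor > MAX_NOISE_FLOOR_DBFS:
        warnings.append(f"noise floor {noise_floor:.1f} dBFS: find a quieter, deader spot")
    return {"duration_s": duration, "peak_dbfs": peak,
            "noise_floor_dbfs": noise_floor, "warnings": warnings}


def synthetic_wav(silence_s: float, tone_s: float, rate: int = 16_000,
                  amplitude: int = 10_000) -> io.BytesIO:
    """Demo input: a pause followed by a 220 Hz tone, as an in-memory WAV."""
    data = array.array("h", [0] * int(silence_s * rate))
    data.extend(int(amplitude * math.sin(2 * math.pi * 220 * n / rate))
                for n in range(int(tone_s * rate)))
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wf:
        wf.setnchannels(1)
        wf.setsampwidth(2)
        wf.setframerate(rate)
        wf.writeframes(data.tobytes())
    buf.seek(0)
    return buf


print(check_sample(synthetic_wav(2, 10))["warnings"])  # 12 s demo clip
print(check_sample(synthetic_wav(1, 4))["warnings"])   # 5 s demo clip: too short
```

On a real recording, call `check_sample("my_sample.wav")` (the filename is a placeholder) and fix anything listed in `warnings` before uploading.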

5. Troubleshooting: Why Does My Clone Sound Robotic?

You followed the steps, but the result still sounds like a 1980s answering machine. Here’s what probably went wrong:

- Background Noise: AI is sensitive. It might mistake the low hum of your AC for part of your voice's "texture." When it tries to replicate that on new words, you get weird digital artifacts. Fix: Use Adobe Podcast's Enhance Speech or Audacity's Noise Reduction effect to clean the noise before uploading.

- Speed Kills: If you rap your sample at 200 words per minute, the AI will try to keep that pace. Feed it a slow, dramatic sentence later, and it will sound jittery because it's stretching a "fast" model over a "slow" line. Fix: Speak at a moderate, conversational pace.

- Over-Processing: Don't EQ or compress your audio to death before uploading. You want a raw, clean signal. If you artificially boost the bass, the AI tries to replicate that boost and the result is a muddy mess.
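For the "Speed Kills" problem, a quick way to check your pace is words per minute: count the words in your script and divide by the clip length. A small sketch; the 120 to 170 wpm "conversational" band is an assumed rule of thumb rather than a documented limit of any cloning tool, so treat the verdicts as hints.

```python
def words_per_minute(transcript: str, duration_s: float) -> float:
    """Speaking rate of a reference clip, from its transcript and its length."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    return len(transcript.split()) * 60 / duration_s


def pace_verdict(wpm: float, low: float = 120.0, high: float = 170.0) -> str:
    """Rough verdict; the 120-170 wpm band is an assumed rule of thumb."""
    if wpm > high:
        return "too fast: slow down and re-record"
    if wpm < low:
        return "slow: consider a more natural pace"
    return "conversational: good reference pace"


script = ("Read a news paragraph or a story to give the AI "
          "a full buffet of vowels and consonants.")  # 18 words
for seconds in (6.0, 8.0):
    wpm = words_per_minute(script, seconds)
    print(f"{seconds:.0f} s read: {wpm:.0f} wpm -> {pace_verdict(wpm)}")
```

Reading those 18 words in 6 seconds works out to 180 wpm (flagged as too fast); stretching them to 8 seconds gives 135 wpm.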

6. Ethics & Safety in 2026

We can't talk about this tech without addressing the elephant in the room: Consent.

The tech is neutral; the application is where it gets messy. In 2026, the "Right of Publicity" (a person's right to control commercial use of their identity, which in many places includes their voice) is a major legal issue. You cannot legally clone a celebrity, a politician, or even a local influencer for commercial use without their permission. Period.

Platforms are fighting back. As noted by UNESCO regarding deepfakes and digital trust, the erosion of trust is a real societal risk. That's why reputable platforms now use invisible "audio watermarking": an imperceptible signal embedded in the audio that detection tools can use to flag it as AI-generated, protecting both the creator and the listener.
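Commercial watermarking schemes are proprietary, but the classic spread-spectrum idea behind many audio watermarks fits in a few lines: add a very quiet pseudo-random pattern derived from a secret key, then detect it later by correlating the audio with that same pattern. This is a toy under stated assumptions: a plain sine wave stands in for speech, and the strength value is arbitrary. Unlike production systems, it is not perceptually shaped to be inaudible and would not survive compression or re-recording.

```python
import math
import random


def key_sequence(key: int, n: int) -> list[float]:
    """Pseudo-random +/-1 chips derived from a secret key (the watermark)."""
    rng = random.Random(key)
    return [1.0 if rng.random() < 0.5 else -1.0 for _ in range(n)]


def embed(audio: list[float], key: int, strength: float = 0.02) -> list[float]:
    """Add a low-level, key-derived noise pattern to the audio."""
    chips = key_sequence(key, len(audio))
    return [x + strength * c for x, c in zip(audio, chips)]


def detect(audio: list[float], key: int, strength: float = 0.02) -> bool:
    """Correlate with the keyed pattern: marked audio scores near `strength`,
    unmarked audio (or the wrong key) scores near zero."""
    chips = key_sequence(key, len(audio))
    score = sum(x * c for x, c in zip(audio, chips)) / len(audio)
    return score > strength / 2


rate = 16_000
speech_stand_in = [0.5 * math.sin(2 * math.pi * 220 * n / rate) for n in range(3 * rate)]
marked = embed(speech_stand_in, key=1234)

print("marked, right key:", detect(marked, key=1234))
print("clean audio:      ", detect(speech_stand_in, key=1234))
print("marked, wrong key:", detect(marked, key=9999))
```

The design choice that matters is the secret key: without it, the pattern looks like faint noise, which is why detection is typically offered as a service by whoever holds the key.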

At Kveeky, the stance is simple. We empower creators, not impersonators. Users often ask, "Can AI tools duplicate my voice?" The answer is yes—but it should only ever be your voice, or a voice you have the rights to use.

Frequently Asked Questions

What is the difference between "Instant" and "Professional" voice cloning?

Instant cloning takes a short sample (10 to 30 seconds) to create a usable voice immediately, which is great for memes and short content. Professional cloning needs far more data (30+ minutes) and processing time, but produces a high-fidelity model capable of nuanced emotion, typically used for audiobooks and brand voices.

Is voice cloning legal in 2026?

Yes, if you have consent. Cloning your own voice is fine. Cloning a celebrity or anyone else for commercial purposes without their explicit permission can violate "Right of Publicity" laws and get you sued.

How much audio do I need to clone my voice?

For modern "Zero-shot" tools, you need a clear, noise-free sample of 10 to 30 seconds. For professional custom models that need fine-tuning, be prepared to record 30 minutes to 3 hours of high-quality audio.

Can I use a cloned voice for YouTube monetization?

Yes, YouTube allows monetized content that uses AI voices, but you must own the rights to the voice. Using a cloned celebrity voice without permission can lead to takedown requests (such as privacy or impersonation complaints) or demonetization.

Why does my cloned voice sound robotic?

It's usually the input. Background noise, room echo (reverb), or a "monotone" reading style in your original recording prevents the AI from capturing the natural warmth of a human voice. Clean up the source, fix the result.