Rise of AI Voice Cloning Scams: How I Stole £250 in 15 Minutes

TL;DR

- This article breaks down the growing threat of AI voice cloning scams, in which fraudsters use AI to impersonate loved ones in distress for financial gain. It explains how these scams work, cites real-world examples, and outlines essential protective measures: establishing secret codewords, verifying callers by hanging up and calling back, and limiting the audio you share publicly.

Voice Cloning AI Scams: How to Protect Yourself

AI voice cloning scams are on the rise, with scammers using AI to impersonate family members and request money. Protect yourself by limiting your digital audio footprint and setting up family-only codewords.

AI Voice Cloning Scams

AI voice cloning scams use AI to generate a voice that sounds like a friend or family member in distress. The fake voice asks for emergency financial help, typically claiming that the person is stranded while traveling, has been arrested or kidnapped, or has been in a car accident. Family emergency scams aren't new, but AI voice cloning makes them harder to recognize.

For example, Jennifer DeStefano heard what sounded like her daughter's panicked voice on a call demanding a $1 million ransom for her supposed kidnapping, according to CNN. In another case, a woman lost $15,000 after receiving a call in what sounded like her crying daughter's voice, according to a 2025 WFLA news report.

How AI Voice Cloning Works

Scammers research families on social media, looking for videos that contain a family member's voice. According to an FBI alert, they then use AI tools to make a replica of that voice read a script they have written. Finally, they call the target, play the generated voice message, and follow it with a request for money.

Sean Murphy, a Senior Vice President and the Chief Information Security Officer at BECU, said, "Scammers can pull pieces of a person's real voice and have an AI tool use those voice patterns to create synthetic conversations, copying and manipulating your voice." The synthesized voice might sound almost identical to your own, even when crying, and might take just a few seconds to engineer.

Other Types of AI Voice Scams

Murphy said AI voice cloning makes "social engineering," which exploits trust within social networks, more efficient and effective. Even top U.S. officials have been targeted by social engineering tactics, according to a May FBI alert.

- Vishing Scams: Scammers can pair AI-generated voice or video messages with malicious links. This is known as "vishing," short for "voice phishing," and is related to phishing (via email) and smishing (via SMS text message).

- Voice Verification Scams: These scams collect voice samples to bypass voice recognition for account access. According to Murphy, scammers might ask seemingly innocent questions to gather voice patterns for impersonation.

Protecting Yourself from AI Voice Scams

- Create a Secret Word or Phrase: Agree on a secret word or phrase with family members, and ask that it be shared only within the family. Alternatively, set up security questions that only family members can answer and whose answers can't be found on social media.

- Don't Trust Caller ID: Spoofed phone numbers can make it look as though a call is coming from someone you know. Hang up and call the person back on a number you trust. Be cautious of any urgent request, even if the voice sounds familiar, Murphy said.

- Limit Public Audio and Video: Set social media profiles to private or restrict followers to people you know, the FBI suggested.

- Don't Give Sensitive Information: If a caller asks for personal information, hang up and contact the institution directly. Never provide your Social Security number, full bank account number, username or password, or two-factor authentication codes. For steps and tips, visit the Fraud & Security Center at https://kveeky.com/news.

- Pay Attention to Cash Requests: Scammers prefer money delivered in a way that's hard to recover, including cash, cryptocurrency, gift cards, wire transfers, and prepaid debit cards.

- Slow Down: Scammers use emotion and urgency. Stay calm and investigate the situation rationally.

- Report Fraud: Report scams online to the Federal Trade Commission (FTC).
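The checklist above can be sketched as a simple "verify before you pay" decision aid. This is a hypothetical illustration, not a tool from the FBI, FTC, or BECU: the `CallFacts` record, the `red_flags` function, and the example codeword are invented for this sketch. Only the list of hard-to-recover payment methods comes directly from the advice above.

```python
from dataclasses import dataclass

# Payment methods the advice above calls hard to recover.
HARD_TO_RECOVER = {
    "cash",
    "cryptocurrency",
    "gift card",
    "wire transfer",
    "prepaid debit card",
}


@dataclass
class CallFacts:
    """Hypothetical record of what you know about an unexpected call."""
    codeword_given: str                  # what the caller said when asked for the family codeword
    called_back_on_trusted_number: bool  # did you hang up and redial a number you already had?
    payment_method: str                  # how the caller wants the money sent
    urgent: bool                         # is the caller pressing you to act immediately?


def red_flags(facts: CallFacts, family_codeword: str) -> list[str]:
    """Return the warning signs present in a call (an empty list means none found)."""
    flags = []
    # Case- and whitespace-insensitive match against the agreed family codeword.
    if facts.codeword_given.strip().lower() != family_codeword.strip().lower():
        flags.append("wrong or missing codeword")
    # Caller ID can be spoofed, so only a callback to a trusted number counts.
    if not facts.called_back_on_trusted_number:
        flags.append("not verified by calling back on a trusted number")
    if facts.payment_method.strip().lower() in HARD_TO_RECOVER:
        flags.append(f"hard-to-recover payment method: {facts.payment_method}")
    if facts.urgent:
        flags.append("pressure to act immediately")
    return flags


if __name__ == "__main__":
    # A typical "grandchild in trouble" call: no codeword, no callback, gift cards, urgency.
    call = CallFacts(codeword_given="", called_back_on_trusted_number=False,
                     payment_method="Gift card", urgent=True)
    for flag in red_flags(call, family_codeword="blue heron"):
        print("-", flag)
```

Any single flag is a reason to stop and verify; the sketch deliberately returns every flag rather than a score, because one missing check (such as the callback) is enough to justify hanging up.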

AI for Security

Legitimate organizations also use AI defensively. Murphy said his organization is "using AI to help us develop tools and techniques to protect our own systems. We find patterns and create defenses that are more predictive and proactive, rather than being reactive." Many organizations use AI to detect attempts by computers to breach their cybersecurity defenses.

Experiment: AI Voice Cloning in Action

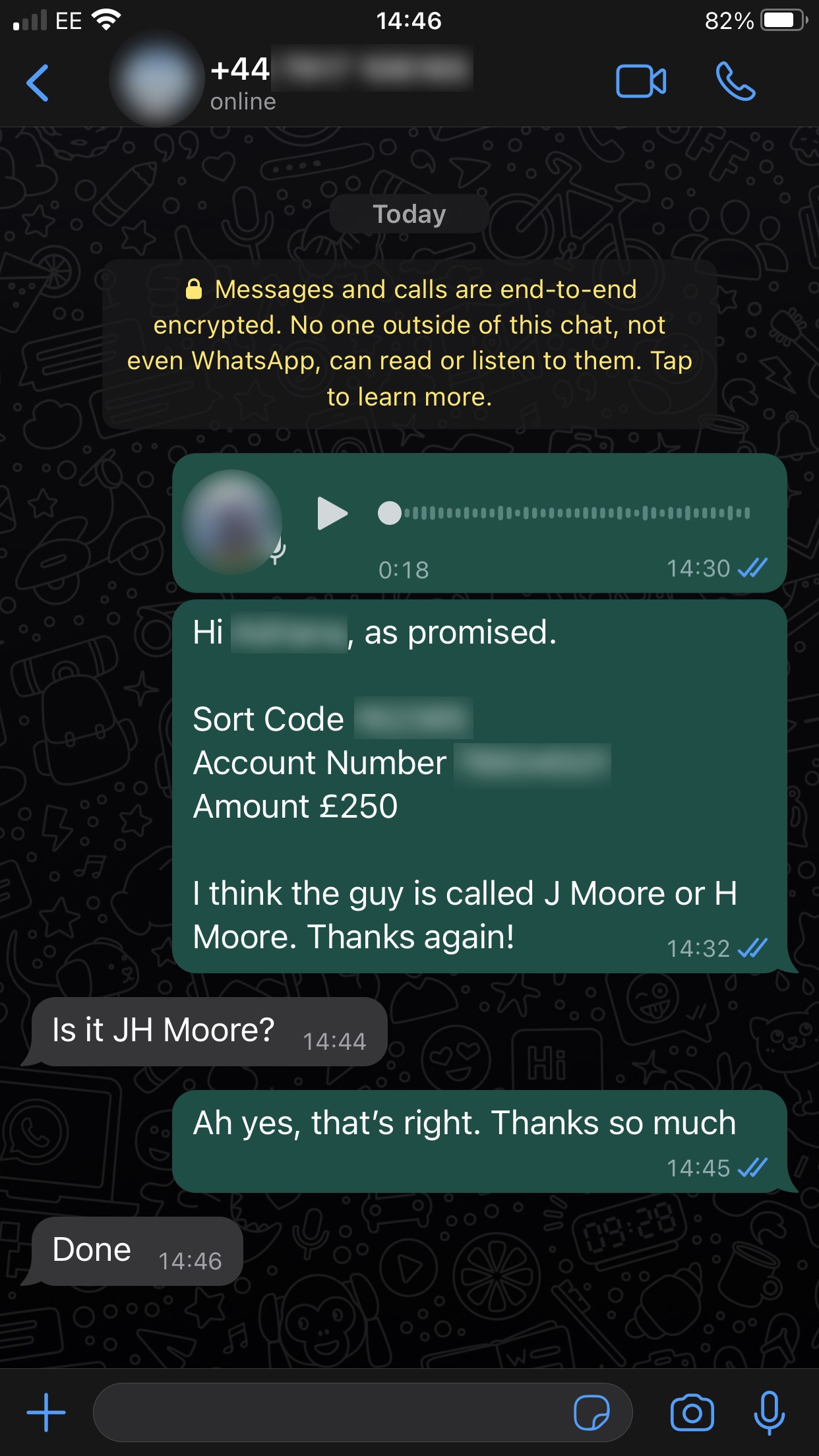

Jake Moore, from cybersecurity company ESET, demonstrated how easy it is to clone a voice and steal money.

Moore took audio samples from his friend's social media posts and uploaded them to specialist software. He wrote a script, and from it the software generated audio that sounded exactly like his friend. Moore then called his friend's mobile phone company, pretending to be his friend, and asked for a replacement SIM card. He put the new SIM card in his own phone, so when he sent the faked voice note to the finance director on WhatsApp, it appeared to come from his friend's number. The finance director responded to the faked voice note.

The experiment highlighted the risk of this scam. One in ten people have received a spoof voice message, according to a global survey by McAfee. About 77 per cent of those lost money, and 70 per cent weren't confident that they could tell the difference between a deepfake and a real voice.

Protecting Yourself from Voice Cloning

- Be suspicious of any call or voice message out of the blue, particularly if it is from an unknown number.

- If you are under pressure, take a step back and check whether it all stacks up, said Alex West from PwC.

- If in doubt, verify who the caller is by asking a question that only the real person would know the answer to.

- Hang up and call the person back on the number you have stored for them.

- Consider creating a code word with family and friends that you can use to verify each other's identity.

- Think carefully about what you share online, as a lot of scammers gather content from social media, said Oliver Devane from McAfee.

Explore how we can help protect your organization from AI-driven fraud at https://kveeky.com/news. Contact us today to learn more.