Tether Introduces AI-Augmented BCI Implants to Standardize Brain-to-Text Speech Decoding Technology

TL;DR

- Tether EVO launches BrainWhisperer, an AI-augmented BCI for neural speech decoding.

- The system achieved a 1.78% word error rate in global competition.

- Processes data locally via Brain OS, ensuring user privacy and data autonomy.

- Uses LoRA fine-tuning to adapt models to individual neural signatures efficiently.

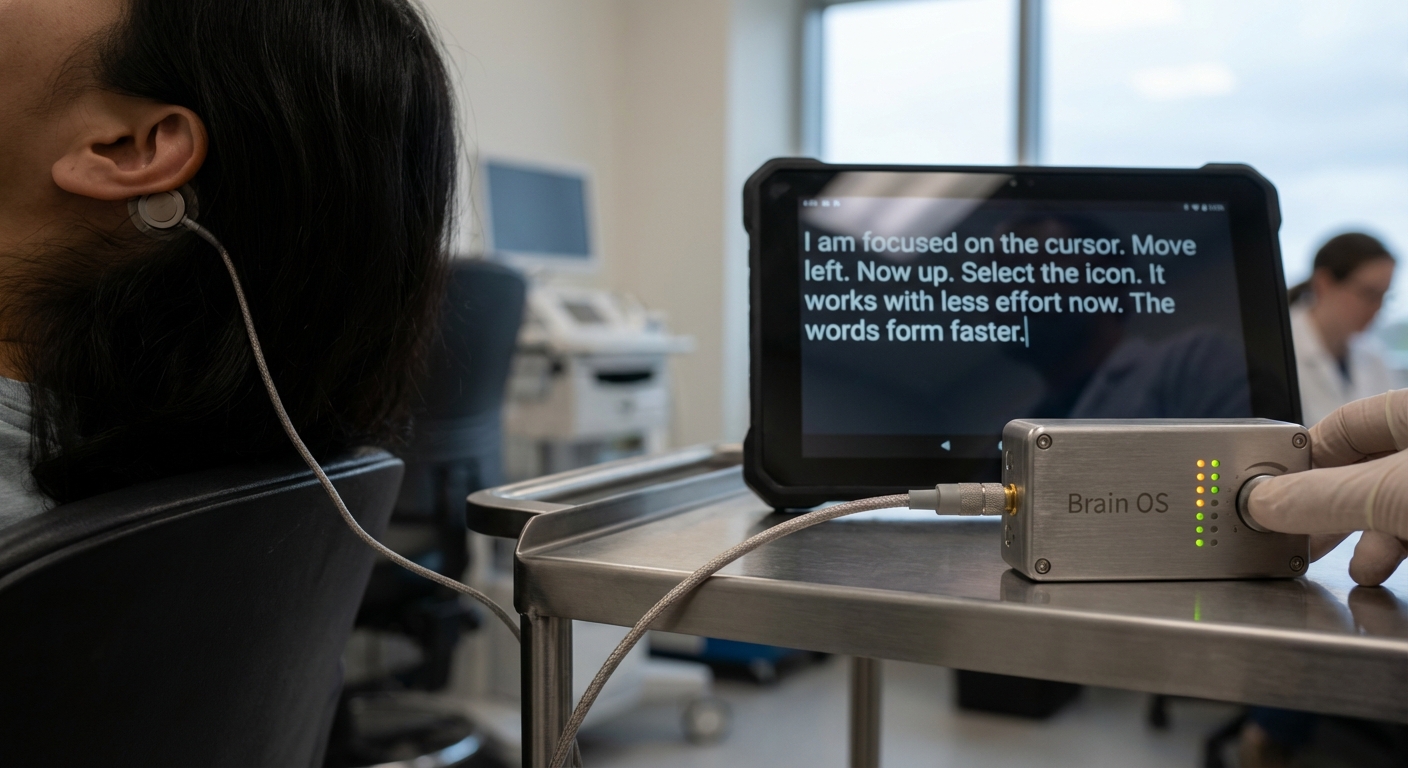

The frontier technology division at Tether, known as Tether EVO, has just pulled the curtain back on "BrainWhisperer." It’s an AI-augmented brain-computer interface (BCI) designed to do one thing, and do it exceptionally well: translate raw neural signals into coherent text. The project recently snagged a 4th place finish in the global "Brain-to-Text '25" Kaggle competition, proving that high-performance neural decoding doesn't have to be a hostage to the cloud.

For those living with speech impairments or paralysis, the stakes here are life-changing. By hitting a 1.78% Word Error Rate (WER) against a field of 466 competitors, the system proved that you can achieve clinical-grade accuracy while keeping the data exactly where it belongs—on the user’s device. This is a massive shift toward data autonomy in a field that usually demands massive, centralized server farms. It’s a win for privacy, and frankly, a win for common sense.

Under the Hood: The Architecture

So, how does it actually work? BrainWhisperer is essentially a multi-stage pipeline built to make sense of the chaotic electrical storm that is human thought. It takes 256 channels of Electrocorticography (ECoG) recordings—the raw, messy electrical patterns of the brain—and translates them into fluent, readable text.

The whole thing runs on "Brain OS," an open-source operating system developed within the Tether ecosystem specifically to handle AI workloads on local hardware. No pinging a server in a different time zone. No waiting for a handshake. Just local, immediate processing.

To get that 1.78% accuracy, the team didn't just throw a single model at the problem. They used an ensemble of five distinct AI models, integrated with a Weighted-Finite-State-Transducer (WFST) to map phoneme sequences into actual words. They also leaned heavily on LoRA (Low-Rank Adaptation) fine-tuning. Think of LoRA as a way to "tune" the model to a specific person’s neural signature without having to retrain the entire system from scratch. It’s efficient, it’s precise, and it keeps the computational footprint small enough to run where it matters.

The performance metrics from the competition paint a clear picture of where this technology stands:

| Metric | Performance Data |

|---|---|

| Competition Rank | 4th out of 466 |

| Word Error Rate (WER) | 1.78% |

| Proximity to 1st Place | 0.25% |

| Neural Input Channels | 256 |

| Core Model Base | OpenAI Whisper |

The Case for Local-First Intelligence

The industry has been obsessed with "bigger is better"—bigger models, bigger data centers, bigger privacy risks. BrainWhisperer flips that script. By prioritizing local execution, the system ensures that your most intimate data—your literal thoughts—never leave your local hardware.

This isn't just a one-off experiment. It ties directly into the broader Tether philosophy of preserving autonomy. They’ve been integrating QVAC and similar fabric-based LLM architectures to make this possible. By bringing the heavy lifting of fine-tuning out of the data center and onto everyday devices, the QVAC fabric makes personalized AI a reality, not a privacy nightmare.

The BrainWhisperer Toolkit

What does this actually mean for the future of BCI? The team at Tether EVO has focused on a few core pillars that define the system’s utility:

- Local-First Processing: By ditching the cloud, they’ve slashed latency and closed the door on data exposure.

- High-Accuracy Decoding: A 1.78% WER puts this in the top tier of global neural-to-text translation.

- Open-Source Foundation: Because it's built on Brain OS, it’s not a walled garden. It’s designed to play nice with a variety of BCI implants and wearables.

- LoRA Fine-Tuning: This allows the system to adapt to an individual’s unique neural patterns without the need for constant, massive retraining cycles.

- Ensemble Modeling: By combining five models and WFSTs, the system remains robust even when neural inputs get noisy or inconsistent.

What Comes Next?

Standardizing brain-to-text technology is the first step toward making assistive communication devices actually usable in the real world. Right now, the barrier to entry for developers and clinicians is high—too high. By providing a reliable, open-source, and privacy-first platform, Tether EVO is essentially handing the keys over to the people who can do the most good with them.

The success of BrainWhisperer in the "Brain-to-Text '25" competition is more than just a trophy on a shelf; it’s a proof-of-concept. It shows that local-first neural decoding isn't just a pipe dream—it’s here. As the tech matures, the real challenge will be hardening it against the noise of the real world and expanding the range of signals it can interpret.

We’re watching the gap between raw neural activity and human-readable communication shrink in real-time. It’s a foundational step, certainly, but it’s one that changes the trajectory of human-computer interaction for good. Where we go from here is up to the developers who pick up these tools and start building.